The rapid evolution of generative artificial intelligence has fundamentally altered the threat landscape, turning sophisticated security protocols that once felt impenetrable into vulnerable targets for state-sponsored actors. Threat intelligence researchers have identified a sharp increase in the use of large language models to automate the exploitation of complex software vulnerabilities, marking a transition in which AI is no longer a supplementary tool but a core engine for offensive digital operations. These attackers are using specialized algorithms to refine phishing lures, generate modular malware, and systematically identify zero-day weaknesses with a speed and precision that manual coding cannot match. With modern AI models, hostile groups can now conduct exhaustive research into firmware and embedded systems, uncovering hidden entry points that previously required months of human analysis. This shift represents a significant escalation in the ongoing struggle for digital sovereignty, because automation lets attackers scale operations globally while still tailoring them to specific high-value targets.

Sophisticated Methods for Circumventing Authentication Protocols

Recent intelligence reports highlight a concerning trend where advanced persistent threat groups, particularly those linked to China and North Korea, utilize Python-based scripts to systematically bypass two-factor authentication. Unlike traditional hacking methods that rely on identifying simple technical bugs or software glitches, these AI-driven campaigns focus on exploiting architectural design flaws within the authentication process itself. This means that even if a user possesses valid login credentials and a secondary verification method, the underlying logic of the session management system can be manipulated to grant unauthorized access. Attackers are training their models on massive repositories of historical security data to recognize specific patterns in how different platforms handle user sessions and token exchanges. By doing so, they can predict how a security system will react to certain stimuli and inject malicious code that mimics legitimate user behavior. This level of mimicry makes it exceptionally difficult for standard monitoring tools to distinguish between a genuine login attempt and a sophisticated AI-driven intrusion.

Beyond the initial breach, AI allows these actors to maintain a persistent presence within a network by generating what are known as mutating payloads. These dynamic scripts alter their own code structure in real time, which hides them from signature-based antivirus software and makes them harder for behavior-based detection systems to track. When a payload enters a target environment, the AI engine can analyze the local security posture and adjust its execution strategy to remain stealthy. For example, if a system begins a memory scan, the malware may temporarily suspend its activities or relocate its processes to a different part of the infrastructure to avoid detection. This capability lets attackers issue live, context-aware commands to compromised systems. The integration of such technology into state-sponsored operations suggests that the goal is no longer just immediate data theft, but the establishment of long-term, covert channels for intelligence gathering and potential kinetic disruption of critical infrastructure.

Proactive Defense Mechanisms and Automated Vulnerability Patching

To counter the rise of automated threats, the security community has begun deploying a new generation of defensive technologies that prioritize autonomous response and predictive analysis over reactive measures. Systems such as Big Sleep and CodeMender represent the cutting edge of this shift, functioning as digital sentinels that proactively scan internal codebases to find and repair vulnerabilities before they are discovered by hostile actors. These tools utilize machine learning to simulate thousands of potential attack scenarios against a piece of software, identifying weak points in the logic that human developers might have overlooked during the initial build phase. Once a flaw is detected, the AI can automatically generate a patch and test it for compatibility, significantly reducing the window of opportunity for an exploit. This transition toward self-healing infrastructure is a direct response to the speed at which AI-powered hackers now operate, acknowledging that human-led security teams can no longer keep pace with the sheer velocity of modern automated reconnaissance and deployment.

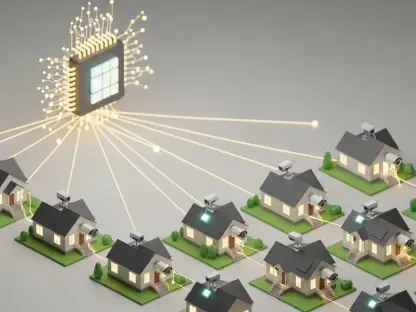

Furthermore, the integration of advanced safeguards into widely used consumer and enterprise platforms is becoming a critical layer of the global defense strategy. Modern AI models are being trained to monitor the entire user ecosystem for subtle indicators of compromise that often precede a major breach, such as unusual API call patterns or minor deviations in administrative account behavior. By analyzing these signals in aggregate, defensive AI can flag a potential state-sponsored campaign in its early stages, allowing for the preemptive revocation of tokens or the implementation of stricter verification requirements for high-risk regions. This creates a feedback loop where every thwarted attack contributes to the intelligence of the defensive model, making the system more resilient against future iterations of the same threat. As the industry moves further into this era of automated conflict, the success of digital security will depend heavily on the ability of these defensive systems to maintain an analytical advantage over the increasingly creative and well-funded operations of state-aligned adversaries.
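As a rough illustration of how "unusual API call patterns" might be flagged, the sketch below compares each account's current hourly API call count with its own history using a simple z-score. The function name `flag_anomalous_accounts`, the account names, and the threshold of three standard deviations are all assumptions made for this example; production systems described in the reports are presumably far more elaborate.

```python
from statistics import mean, stdev


def flag_anomalous_accounts(baseline, current, threshold=3.0):
    """Return accounts whose current hourly API call count deviates from
    their own historical baseline by more than `threshold` standard deviations.

    baseline: dict mapping account name -> list of past hourly call counts
    current:  dict mapping account name -> call count for the current hour
    """
    flagged = []
    for account, history in baseline.items():
        if len(history) < 2:
            continue  # too little history to estimate a standard deviation
        mu = mean(history)
        sigma = stdev(history)
        observed = current.get(account, 0)
        if sigma == 0:
            # Perfectly flat history: any change at all is a deviation.
            is_anomalous = observed != mu
        else:
            is_anomalous = abs(observed - mu) / sigma > threshold
        if is_anomalous:
            flagged.append(account)
    return sorted(flagged)


# Hypothetical data: an administrator account and a backup service account.
baseline = {
    "admin-01": [40, 42, 38, 41, 39],
    "svc-backup": [10, 12, 11, 9, 10],
}
current = {"admin-01": 43, "svc-backup": 95}

print(flag_anomalous_accounts(baseline, current))  # ['svc-backup']
```

A flagged account would then feed the responses the article mentions, such as revoking tokens or requiring stricter verification. A per-account baseline is used here because a volume that is normal for one account can be highly unusual for another.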

Strategic Realignment for Future Security Resilience

The transition toward an environment where artificial intelligence serves as both the primary weapon and the principal shield necessitates a fundamental rethink of how organizational security is structured and maintained. It is clear that relying solely on traditional static passwords, or even standard multi-factor authentication, is insufficient to deter highly motivated state actors with access to vast computing power. Organizations are shifting their focus toward zero-trust architectures that require continuous validation of every device and user session, regardless of location or previous history. This approach ensures that even if a single authentication factor is bypassed through AI manipulation, the intruder immediately faces additional, context-aware hurdles that are much harder to automate against. Hardware-based security keys and biometric verification are becoming the primary standards, because these physical layers are significantly more difficult for remote AI agents to simulate or intercept than SMS codes or software-based tokens.
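The idea of continuous validation can be sketched as a policy check that runs on every request rather than once at login. The example below is a minimal sketch under stated assumptions: the `SessionRecord` and `RequestContext` types, their fields, and the three outcomes (`allow`, `step_up`, `deny`) are hypothetical names chosen for illustration, not part of any specific zero-trust product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionRecord:
    """What the server remembers about a session (hypothetical fields)."""
    session_id: str
    enrolled_device_id: str
    last_country: str


@dataclass(frozen=True)
class RequestContext:
    """Signals presented with each incoming request (hypothetical fields)."""
    session_id: str
    device_id: str
    country: str
    hardware_key_verified: bool


def evaluate_request(session: SessionRecord, ctx: RequestContext) -> str:
    """Re-evaluate trust on every request.

    Returns 'allow', 'step_up' (ask for stronger proof), or 'deny'.
    """
    if ctx.session_id != session.session_id:
        return "deny"  # request does not belong to this session
    if ctx.device_id != session.enrolled_device_id:
        return "deny"  # a valid token replayed from an unknown device
    if not ctx.hardware_key_verified:
        return "step_up"  # require the physical security key again
    if ctx.country != session.last_country:
        return "step_up"  # context changed, so trust is not carried over
    return "allow"


session = SessionRecord("s-123", "laptop-7", "DE")

print(evaluate_request(session, RequestContext("s-123", "laptop-7", "DE", True)))
print(evaluate_request(session, RequestContext("s-123", "unknown-vm", "DE", True)))
print(evaluate_request(session, RequestContext("s-123", "laptop-7", "SG", True)))
```

The point of the design is that a stolen session token alone is not enough: each request is checked against the device, the hardware key, and the surrounding context, so bypassing one factor does not grant standing access.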

Looking ahead to the next phase of digital protection, the primary takeaway is the necessity of collaborative intelligence sharing across the private and public sectors. Because state hackers often use the same AI frameworks to target multiple industries, a vulnerability discovered in one sector often serves as a warning for others. Real-time data pipelines that let different entities share anonymized threat signatures enable a collective defense that exceeds the capabilities of any single organization. Security professionals are encouraged to invest heavily in red-teaming exercises that use offensive AI tools to stress-test their own systems, identifying logical gaps before an actual adversary can exploit them. This proactive stance, combined with the deployment of autonomous patching agents, creates a robust framework for long-term digital resilience. Ultimately, the lesson for the industry is that the only way to effectively combat the ingenuity of AI-powered attackers is to outpace them through superior defensive automation and a relentless commitment to architectural transparency.
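One way to picture the sharing of anonymized threat signatures is a keyed hash: each participant hashes an observed indicator with a key shared inside the consortium, so partners can match identical indicators without exchanging the raw value or any victim details. The sketch below assumes this approach; the event fields, the `anonymize_indicator` helper, and the key are hypothetical, and the article does not specify how real pipelines anonymize their data.

```python
import hashlib
import hmac
import json


def anonymize_indicator(raw_event: dict, shared_key: bytes) -> dict:
    """Build a shareable record from an internal detection event.

    The indicator is replaced by an HMAC-SHA256 digest, and fields that
    identify the reporting organization are simply not copied over.
    """
    digest = hmac.new(
        shared_key,
        raw_event["indicator"].encode("utf-8"),
        hashlib.sha256,
    ).hexdigest()
    return {
        "indicator_hash": digest,
        "indicator_type": raw_event["indicator_type"],
        "first_seen": raw_event["first_seen"][:10],  # keep the date, drop the time
    }


# Hypothetical consortium key and two events seen by different organizations.
SHARED_KEY = b"example-consortium-key"

event_bank = {
    "indicator": "login-portal.example.net",
    "indicator_type": "domain",
    "first_seen": "2025-03-14T08:21:07Z",
    "victim_host": "teller-ws-114",  # internal detail, never shared
}
event_utility = {
    "indicator": "login-portal.example.net",
    "indicator_type": "domain",
    "first_seen": "2025-03-15T19:02:44Z",
    "victim_host": "scada-gw-02",
}

shared_a = anonymize_indicator(event_bank, SHARED_KEY)
shared_b = anonymize_indicator(event_utility, SHARED_KEY)

print(json.dumps(shared_a, indent=2))
print("same indicator seen by both:", shared_a["indicator_hash"] == shared_b["indicator_hash"])
```

Keyed hashing is only a partial safeguard: anyone who holds the shared key can test guesses against a hash, so low-entropy indicators such as IP addresses remain recoverable by other members of the consortium.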