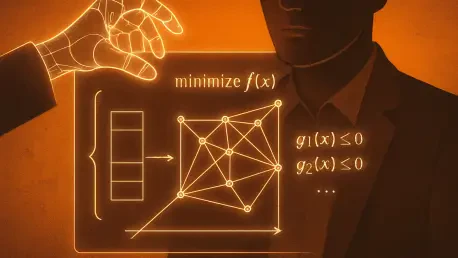

The invisible hand guiding our modern world no longer belongs to a human economist but to a complex set of mathematical instructions that dictate everything from the speed of a medical response to the efficiency of a global power grid. As these optimization systems become more deeply embedded in the foundational layers of society, the traditional “black-box” approach—where a machine provides a result without any explanation of its logic—has moved from being a technical quirk to a systemic liability. This review examines the emergence of transparent optimization algorithms, a critical technological shift designed to ensure that as our infrastructure becomes more automated, it also remains inherently understandable and accountable to the people who manage it.

The Evolution of Algorithmic Transparency in Optimization

The transition toward transparency represents a fundamental departure from the legacy models of the early 2020s, which prioritized raw computational speed over interpretability. Historically, optimization was viewed as a closed mathematical exercise: an algorithm would ingest constraints such as time, cost, and distance and return a single “optimal” path. However, this efficiency often came at the cost of context. In high-stakes environments, such as managing a city’s emergency services, a human supervisor needs to know why a specific route was chosen, especially if that choice seems to contradict local intuition or immediate safety concerns.

This evolutionary pressure has forced a merger between operations research and Explainable AI. The goal is no longer just to find the best solution, but to provide a “traceable” logic path. This shift is driven by the realization that in 2026, the bottleneck for AI adoption is no longer processing power, but trust. By opening the “black box,” developers are creating a framework where the machine serves as a sophisticated advisor rather than an opaque oracle, bridging the gap between cold mathematical certainty and the nuanced requirements of real-world human oversight.

Core Components of Explainable Optimization

Machine Learning for Decision Decoding

Modern transparent optimization uses machine learning not to perform the calculation itself, but to interpret the results of complex mathematical models. This “meta-layer” of AI analyzes thousands of potential solutions to identify the structural patterns that led to the final choice. By doing so, the system can pinpoint which variables (a sudden change in weather, say, or a minor supply chain delay) pushed the algorithm toward one decision over another. What distinguishes this approach is that it treats the optimization process as a data source to be mined for insights, rather than just a calculation to be performed.

Human-Readable Reasoning and Plain-Language Output

A primary breakthrough in this field is the ability to translate “shadow prices” (the marginal value of relaxing a constraint) and “multi-dimensional constraints” into sentences that a human can actually use. When an algorithm handles a classic problem such as the knapsack problem, which asks which items to pack into a limited space, it can now explain its trade-offs. Instead of a spreadsheet of coordinates, a logistics manager receives a report stating that specific cargo was excluded because prioritizing battery life for a return flight was deemed more critical than maximizing total payload. This capability turns the operator from a passive observer into an informed decision-maker.
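To make the knapsack example concrete, here is a minimal sketch of how an exclusion could be turned into a sentence. It is an illustration under stated assumptions, not a description of any particular product: the item names, weights, and values are invented, and the explanation method (re-solving with the excluded item forced in and reporting the value lost) is one simple choice among many.

```python
from typing import List, NamedTuple, Set, Tuple


class Item(NamedTuple):
    name: str
    weight: int  # integer units, e.g. kilograms (hypothetical data)
    value: int   # planner-assigned priority score (hypothetical data)


def solve_knapsack(items: List[Item], capacity: int) -> Tuple[int, Set[int]]:
    """0/1 knapsack by dynamic programming. Returns (best value, chosen indices)."""
    n = len(items)
    best = [[0] * (capacity + 1) for _ in range(n + 1)]
    for i, item in enumerate(items, start=1):
        for c in range(capacity + 1):
            best[i][c] = best[i - 1][c]
            if item.weight <= c:
                best[i][c] = max(best[i][c], best[i - 1][c - item.weight] + item.value)
    # Walk the table backwards to recover which items were packed.
    chosen: Set[int] = set()
    c = capacity
    for i in range(n, 0, -1):
        if best[i][c] != best[i - 1][c]:
            chosen.add(i - 1)
            c -= items[i - 1].weight
    return best[n][capacity], chosen


def explain_exclusions(items: List[Item], capacity: int) -> List[str]:
    """For each excluded item, report what forcing it into the load would cost."""
    best_value, chosen = solve_knapsack(items, capacity)
    reports = []
    for idx, item in enumerate(items):
        if idx in chosen:
            continue
        if item.weight > capacity:
            reports.append(f"{item.name} was excluded: it weighs {item.weight}, "
                           f"more than the capacity of {capacity}.")
            continue
        # Re-solve with this item forced in, then compare against the optimum.
        others = items[:idx] + items[idx + 1:]
        forced_value = item.value + solve_knapsack(others, capacity - item.weight)[0]
        loss = best_value - forced_value
        if loss > 0:
            reports.append(f"{item.name} was excluded: carrying it would lower the "
                           f"total value from {best_value} to {forced_value} "
                           f"(a loss of {loss}).")
        else:
            reports.append(f"{item.name} was excluded, but an equally good load includes it.")
    return reports


CARGO = [
    Item("battery reserve", 4, 10),
    Item("medical kit", 3, 7),
    Item("spare rotor", 2, 3),
    Item("camera", 3, 4),
]

if __name__ == "__main__":
    for line in explain_exclusions(CARGO, capacity=7):
        print(line)
```

The forced-inclusion comparison is deliberately crude. A production system would more likely derive its explanations from dual values or a learned surrogate, but the output has the same shape: a quantified reason attached to each rejected option.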

The Collaborative Audit Layer

Rather than attempting to rebuild established optimization software from scratch, transparency is often implemented as a modular “audit layer.” This component runs parallel to the primary system, flagging “brittle” decisions—scenarios where a tiny, one-percent change in a single variable would have resulted in a completely different outcome. By highlighting these sensitivities, the audit layer provides a safety net. It allows humans to see where the machine’s logic is most vulnerable to real-world volatility, ensuring that the chosen solution is not just mathematically optimal, but practically robust.
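The brittleness check described above can be sketched in a few lines. This is a minimal illustration that assumes the primary optimizer can be called as a black-box function from numeric inputs to a decision; `find_brittle_inputs`, `pick_route`, and the travel times are hypothetical names and data chosen for the example.

```python
from typing import Callable, Dict, Hashable, List


def find_brittle_inputs(
    decide: Callable[[Dict[str, float]], Hashable],
    params: Dict[str, float],
    rel_change: float = 0.01,
) -> List[str]:
    """Nudge each input up and down by rel_change and flag any decision flip."""
    baseline = decide(params)
    flags = []
    for name, value in params.items():
        for sign, factor in (("-", 1 - rel_change), ("+", 1 + rel_change)):
            perturbed = dict(params)  # copy so the caller's inputs stay untouched
            perturbed[name] = value * factor
            outcome = decide(perturbed)
            if outcome != baseline:
                flags.append(f"{name} {sign}{rel_change:.0%}: decision changes "
                             f"from {baseline} to {outcome}")
    return flags


def pick_route(p: Dict[str, float]) -> str:
    """Toy stand-in for the primary optimizer: take the faster of two routes."""
    return "highway" if p["highway_minutes"] <= p["surface_minutes"] else "surface"


if __name__ == "__main__":
    close_call = {"highway_minutes": 20.0, "surface_minutes": 20.1}
    for flag in find_brittle_inputs(pick_route, close_call):
        print(flag)
```

Because the audit only calls the optimizer and never modifies it, the check can sit beside an existing system, which is the point of the modular design. The cost is two extra solves per input, so a real deployment would probably restrict the sweep to the inputs most likely to drift.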

Innovations and Shifting Industry Trends

The current trajectory of the industry is defined by a move toward “explainable autonomy.” As we see more autonomous drones and self-driving delivery fleets, the demand for auditable logic has become a regulatory necessity. Developers are moving away from total machine independence, favoring systems that allow for seamless human-in-the-loop intervention. This trend reflects a broader maturity in the tech sector: a recognition that the most powerful systems are those that can justify their actions to a legal or ethical board.

Real-World Applications and Sector Deployment

Critical Infrastructure and Emergency Services

In emergency response, where seconds can cost lives, transparent algorithms allow dispatchers to validate AI-suggested routes quickly. If an algorithm suggests a longer path for an ambulance, the transparency layer can explain that the shorter route is currently obstructed by unmapped utility work or extreme congestion. This insight prevents the “blind trust” that has led to navigation errors in the past, allowing human expertise to override the machine when the machine’s data is incomplete.
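One simple way to produce that kind of explanation is to solve the routing problem twice, once with the reported obstructions and once without, and then describe the difference. The sketch below assumes a small directed road graph with travel times in minutes; the graph, node names, and function names are invented for illustration.

```python
import heapq
from typing import Dict, FrozenSet, List, Tuple

Graph = Dict[str, Dict[str, float]]  # node -> {neighbor: travel minutes}


def shortest_path(graph: Graph, start: str, goal: str,
                  blocked: FrozenSet[Tuple[str, str]] = frozenset()) -> Tuple[float, List[str]]:
    """Dijkstra's algorithm; returns (minutes, path), or (inf, []) if unreachable."""
    queue = [(0.0, start, [start])]
    seen = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in seen:
            continue
        seen.add(node)
        for nxt, minutes in graph.get(node, {}).items():
            if (node, nxt) in blocked or nxt in seen:
                continue
            heapq.heappush(queue, (cost + minutes, nxt, path + [nxt]))
    return float("inf"), []


def explain_route(graph: Graph, start: str, goal: str, blocked) -> str:
    """Compare the chosen route with the unobstructed optimum and say why they differ."""
    chosen_cost, chosen = shortest_path(graph, start, goal, frozenset(blocked))
    ideal_cost, ideal = shortest_path(graph, start, goal)
    if not chosen:
        return "No route is available with the current obstructions."
    if chosen == ideal:
        return f"Chose {' -> '.join(chosen)} ({chosen_cost:g} min), the fastest route."
    # Name the blocked segments that lie on the route a dispatcher would expect.
    hit = [f"{a} -> {b}" for a, b in zip(ideal, ideal[1:]) if (a, b) in blocked]
    return (f"Chose {' -> '.join(chosen)} ({chosen_cost:g} min) over the shorter "
            f"{' -> '.join(ideal)} ({ideal_cost:g} min) because of reported "
            f"obstructions on: {', '.join(hit)}.")


ROADS: Graph = {
    "station": {"A": 4, "B": 6},
    "A": {"hospital": 5},
    "B": {"hospital": 6},
}

if __name__ == "__main__":
    print(explain_route(ROADS, "station", "hospital", blocked={("A", "hospital")}))
```

The dispatcher sees both the route and the assumption behind it. If they know the utility work on the blocked segment has already finished, they have a specific claim to dispute rather than an unexplained detour.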

Logistics and Autonomous Delivery

In global supply chains, these algorithms are being used to balance ethical constraints with profit margins. Companies can now set specific “fairness” or “sustainability” parameters and see exactly how those settings impact the final delivery schedule. For drone logistics, this means a supervisor can see if a drone is taking a less efficient route specifically to avoid noise-sensitive residential areas, ensuring that the company’s automated operations remain compliant with both local regulations and corporate social responsibility goals.
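The effect of such a parameter can be shown by sweeping it and recording how the chosen route changes. The following sketch assumes the sustainability setting is modeled as a per-zone time penalty added to each route's cost; the routes, the penalty values, and the name `quiet_zones_crossed` are hypothetical.

```python
from typing import List, NamedTuple


class Route(NamedTuple):
    name: str
    minutes: float
    quiet_zones_crossed: int  # noise-sensitive residential areas on the route


def choose_route(routes: List[Route], noise_penalty: float) -> Route:
    """Pick the route minimizing flight time plus a penalty per quiet zone crossed."""
    return min(routes, key=lambda r: r.minutes + noise_penalty * r.quiet_zones_crossed)


def sweep_penalty(routes: List[Route], penalties: List[float]) -> List[str]:
    """Report the chosen route and its time cost for each penalty setting."""
    fastest = min(r.minutes for r in routes)
    lines = []
    for p in penalties:
        pick = choose_route(routes, p)
        lines.append(f"penalty={p:g}: {pick.name} "
                     f"(+{pick.minutes - fastest:g} min vs fastest, "
                     f"{pick.quiet_zones_crossed} quiet zones crossed)")
    return lines


ROUTES = [
    Route("direct", 10, 3),
    Route("industrial", 11, 1),
    Route("river", 13, 0),
]

if __name__ == "__main__":
    for line in sweep_penalty(ROUTES, [0, 1, 3]):
        print(line)
```

A table like this lets a supervisor see the price of each policy setting in minutes, which is the kind of visibility the paragraph above describes.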

Technical Hurdles and Regulatory Obstacles

Despite the progress made by 2026, significant hurdles remain. Generating these explanations requires additional computational overhead, which can be a drawback in millisecond-sensitive environments like high-frequency trading or real-time grid balancing. Furthermore, there is no universal standard for what a “sufficient” explanation looks like. Regulators are currently grappling with how to define transparency in a way that is legally defensible without stifling the very innovation that makes these algorithms useful in the first place.

Future Outlook and Long-Term Impact

The future of these systems lies in a more conversational interaction between humans and machines. We are approaching a point where a technician might “debate” an optimization engine, asking “what if” questions to explore different risk profiles in real time. The long-term aim is to close the accountability gap entirely. As we delegate more authority to autonomous systems, the human element will shift from micromanaging tasks to defining the ethical and strategic boundaries within which the machine operates, creating a more resilient and transparent societal infrastructure.

Summary of Algorithmic Oversight and Impact

The adoption of transparent optimization algorithms marks a turning point in the relationship between human intuition and machine efficiency. By prioritizing interpretability, these systems move beyond the limitations of opaque “black-box” modeling and provide the clarity needed for high-stakes decision-making. Audit layers and natural-language reasoning return the human element to the center of automated infrastructure, so that efficiency no longer has to come at the expense of accountability. Looking forward, the focus must remain on standardizing these transparency frameworks across international borders. As these technologies continue to evolve, they will likely become the mandatory baseline for any AI system that impacts public safety or economic stability, aligning the speed of the algorithm with the responsibility of the human supervisor.