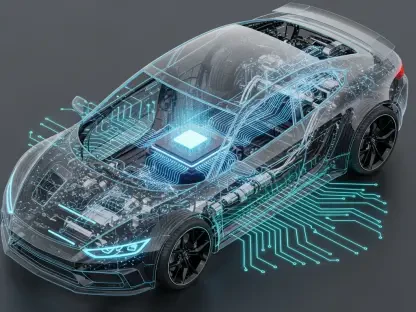

The traditional reliance on massive, centralized data centers is rapidly giving way to a more agile and distributed architecture that places computational power directly at the network’s periphery. As the global tally of connected devices is projected to surpass thirty billion by the end of 2027, the limitations of standard cloud models have become increasingly apparent, particularly regarding latency and bandwidth consumption. Modern infrastructure now requires a “data-first” philosophy where information is processed at the source, rather than being funneled through congested long-distance pipelines to a distant server. This architectural pivot is not merely a technical preference but a strategic necessity for industries ranging from autonomous logistics to precision healthcare. By localizing logic and storage, organizations sharply reduce the bottleneck of high-latency connections, allowing digital intelligence to be integrated more tightly into the physical world. The result is a more resilient ecosystem that can maintain operational continuity even when external network connections falter, improving the reliability of the Internet of Things.

Securing the Digital Frontier Through Decentralized Intelligence

Federated learning is changing how artificial intelligence operates within the industrial landscape by allowing large clusters of devices to train sophisticated models without exposing raw, sensitive data. In this decentralized framework, individual sensors and controllers perform local computations on the data they harvest, transmitting only the resulting mathematical gradients or encrypted parameters to a global aggregator. This method substantially reduces the “honeypot” risk associated with centralized cloud databases, where a single breach could compromise the entire information repository of an international enterprise. By keeping the actual data points on-site, businesses can leverage the collective intelligence of thousands of nodes while maintaining a rigorous security posture. This localized approach also facilitates a faster iteration cycle for machine learning models, as updates can be validated against real-world conditions in real time rather than waiting for massive batch uploads to conclude.
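The train-locally, aggregate-centrally loop described above can be sketched in a few lines. This is a minimal illustration rather than a production design: it assumes a toy linear model, plain federated averaging of updated weights (a common variant of sending gradients), and no encryption or secure aggregation. The names `local_update`, `federated_average`, and `train` are hypothetical.

```python
from typing import List, Tuple

Weights = List[float]             # [slope, intercept] of a toy linear model
Sample = Tuple[float, float]      # (x, y) pair that never leaves the device


def local_update(weights: Weights, data: List[Sample], lr: float = 0.1) -> Weights:
    """Take one gradient-descent step on mean squared error using local data only."""
    w, b = weights
    n = len(data)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
    return [w - lr * grad_w, b - lr * grad_b]


def federated_average(updates: List[Tuple[Weights, int]]) -> Weights:
    """Combine client weights, weighting each client by its sample count."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


def train(clients: List[List[Sample]], rounds: int) -> Weights:
    """Run several rounds; only weights travel to the aggregator, never raw samples."""
    global_weights = [0.0, 0.0]
    for _ in range(rounds):
        updates = [(local_update(global_weights, data), len(data)) for data in clients]
        global_weights = federated_average(updates)
    return global_weights


if __name__ == "__main__":
    # Two devices, each holding private samples drawn from y = 2x + 1.
    clients = [[(0.0, 1.0), (1.0, 3.0)], [(2.0, 5.0), (3.0, 7.0)]]
    print(train(clients, rounds=300))  # approaches [2.0, 1.0]
```

Weighting by sample count keeps a device with few readings from dominating the global model. A real deployment would typically add secure aggregation or differential privacy, since shared parameters can still leak information about the underlying data.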

Beyond the theoretical benefits of privacy, the proximity of processing power at the edge enables a level of real-time analytics that traditional cloud frameworks struggle to sustain. In high-speed environments, such as semiconductor fabrication plants or automated sorting facilities, even a few milliseconds of delay can lead to significant financial loss or mechanical damage. Localized edge nodes provide the necessary throughput to analyze high-definition video feeds or vibration sensors at millisecond granularity, identifying anomalies the moment they occur. This immediate responsiveness allows production lines to be halted or adjusted autonomously, preventing minor glitches from cascading into catastrophic failures. Furthermore, by filtering out the vast majority of routine, non-essential data at the source, edge systems drastically reduce the financial burden of cloud storage and egress fees. This creates a lean, high-performance data pipeline that prioritizes actionable insights over the indiscriminate collection of digital noise.
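One simple way an edge node might separate anomalies from routine readings is a rolling z-score: each new value is compared against the mean and spread of recent history, and only outliers are forwarded. The sketch below assumes a single scalar sensor (a vibration amplitude, say); the class name, window size, and threshold are illustrative choices, not values from any particular product.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Iterable, List


class EdgeAnomalyFilter:
    """Flags readings that deviate strongly from a rolling window of recent values."""

    def __init__(self, window: int = 50, threshold: float = 3.0, warmup: int = 5):
        self.history = deque(maxlen=window)
        self.threshold = threshold
        self.warmup = warmup  # readings needed before any judgement is made

    def process(self, reading: float) -> bool:
        """Return True if the reading is anomalous relative to recent history."""
        is_anomaly = False
        if len(self.history) >= self.warmup:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            # A perfectly flat history (sigma == 0) gives no scale, so nothing is flagged.
            if sigma > 0 and abs(reading - mu) / sigma > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            # Keep outliers out of the baseline so one spike does not mask the next.
            self.history.append(reading)
        return is_anomaly


def forward_anomalies(readings: Iterable[float]) -> List[float]:
    """Return only the readings worth sending upstream; the rest stay local."""
    detector = EdgeAnomalyFilter()
    return [r for r in readings if detector.process(r)]


if __name__ == "__main__":
    stream = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 9.0, 1.0]
    print(forward_anomalies(stream))  # [9.0]
```

Here nine readings are reduced to one forwarded value, which is the bandwidth saving the paragraph describes. Production systems usually rely on more robust statistics or learned models, but the filtering pattern is the same.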

Navigating Global Compliance and Data Governance

Edge computing offers a robust and practical solution for global enterprises currently struggling to navigate the complex web of international data sovereignty and strict privacy regulations. With the expansion of regional laws like the General Data Protection Regulation and various localized cybersecurity acts, the ability to silo sensitive information within specific geographic or corporate boundaries has become a major competitive advantage. By deploying edge gateways that categorize and process information locally, companies can ensure that personally identifiable information or proprietary trade secrets never leave the physical premises. Only anonymized, aggregated metadata is allowed to pass through to the broader cloud for long-term trend analysis, which satisfies both regulatory requirements and corporate security mandates. This architectural control simplifies the process of passing rigorous audits, as data lineage is clearly defined and physically constrained to authorized nodes.
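As a rough sketch of the gateway behaviour described above, the following drops identifying fields on-site and releases only per-zone aggregates. The field names (`name`, `email`, `badge_id`, `zone`, `temperature_c`) and the minimum group size are hypothetical. Whether output like this counts as anonymized under a given regulation is a legal question the code does not settle.

```python
from statistics import mean
from typing import Dict, List

# Hypothetical list of fields treated as personally identifiable at this site.
PII_FIELDS = {"name", "email", "badge_id"}


def strip_pii(record: dict) -> dict:
    """Return a copy of the record without any identifying fields."""
    return {k: v for k, v in record.items() if k not in PII_FIELDS}


def aggregate_for_cloud(records: List[dict], min_group_size: int = 3) -> List[dict]:
    """Build per-zone summaries; suppress zones too small to hide an individual."""
    groups: Dict[str, List[float]] = {}
    for record in records:
        clean = strip_pii(record)
        groups.setdefault(clean["zone"], []).append(clean["temperature_c"])
    return [
        {"zone": zone, "count": len(vals), "mean_temperature_c": round(mean(vals), 2)}
        for zone, vals in sorted(groups.items())
        if len(vals) >= min_group_size
    ]


if __name__ == "__main__":
    local_records = [
        {"name": "A. Operator", "email": "a@example.com", "zone": "A", "temperature_c": 20.0},
        {"name": "B. Operator", "email": "b@example.com", "zone": "A", "temperature_c": 21.0},
        {"name": "C. Operator", "email": "c@example.com", "zone": "A", "temperature_c": 22.0},
        {"name": "D. Operator", "email": "d@example.com", "zone": "B", "temperature_c": 30.0},
    ]
    # Zone B has a single record, so it is withheld rather than exposed.
    print(aggregate_for_cloud(local_records))
```

The minimum group size keeps a summary from describing one identifiable person, in the spirit of k-anonymity. The raw records stay in local storage, which also keeps the lineage of any cloud-side figure easy to trace back to a specific gateway.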

The sheer volume of data generated by modern sensor arrays often becomes an unmanageable burden, yet edge systems mitigate this through intelligent information curation and the management of perishable data. In many industrial applications, a significant portion of collected metrics holds immense value for only a brief window, such as the precise temperature of a chemical reaction or the instantaneous pressure in an oil pipeline. If this information is not acted upon immediately, its utility vanishes, making the delay of cloud transmission inherently counterproductive. Edge nodes are programmed to recognize these high-stakes moments, triggering immediate corrective actions or local alerts while discarding the irrelevant background data. This proactive filtering ensures that the broader corporate network is not overwhelmed by an endless stream of low-value metrics, allowing human operators and executive dashboards to focus on the most critical operational indicators and long-term performance trends.
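The idea of perishable data can be made concrete by giving each sensor an alarm limit and a shelf life, then triaging each reading on arrival. The sketch below is illustrative only: the sensor names, limits, and shelf lives in `RULES` are invented, and a real controller would attach an actual corrective action to each outcome.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    sensor: str
    value: float
    captured_at: float  # seconds on the node's clock


# Hypothetical per-sensor rules: (alarm limit, seconds the reading stays actionable).
RULES = {
    "reactor_temp_c": (180.0, 0.5),
    "pipeline_pressure_kpa": (900.0, 0.2),
}


def triage(reading: Reading, now: float) -> str:
    """Decide what to do with a reading based on its age and its value."""
    limit, shelf_life = RULES[reading.sensor]
    age = now - reading.captured_at
    if age > shelf_life:
        return "discard_stale"      # too old to act on; its value has expired
    if reading.value > limit:
        return "act_now"            # fresh and over the limit: trigger a local response
    return "discard_routine"        # fresh but unremarkable background data


if __name__ == "__main__":
    hot = Reading("reactor_temp_c", 195.0, captured_at=10.0)
    print(triage(hot, now=10.1))   # act_now
    print(triage(hot, now=11.0))   # discard_stale
```

The second call shows why a round trip to a distant server is counterproductive here: the same over-limit reading is worthless once its shelf life has passed.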

Advancing Industrial Autonomy via Swarm Intelligence

The emergence of swarm intelligence marks a fundamental shift from simple device-to-hub connectivity to a sophisticated, collaborative environment powered by direct device-to-device communication. Leveraging high-speed, low-latency technologies such as 5G and specialized mesh networking, independent robotic units and sensors can now operate as a unified, decentralized collective. This setup allows for true operational autonomy, where machines negotiate tasks and share environmental data in real time without the need for constant oversight from a central management server. If a single unit in a logistics warehouse identifies an obstruction or experiences a hardware malfunction, it can broadcast this information to its peers almost instantly. The remaining members of the swarm then recalibrate their paths and redistribute the workload dynamically, so that the overall mission objective is still completed efficiently. This kind of self-healing infrastructure significantly reduces downtime and operational costs.
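The workload redistribution step can be illustrated with a small deterministic rule: when a unit reports a failure, each orphaned task goes to the currently least-loaded healthy peer. This is a simplified sketch with hypothetical unit and task names. It models only the reassignment logic, not the radio or mesh layer that carries the broadcast.

```python
from typing import Dict, List


def redistribute(assignments: Dict[str, List[str]], failed: str) -> Dict[str, List[str]]:
    """Return new assignments with the failed unit's tasks spread across its peers."""
    orphaned = assignments.get(failed, [])
    peers = {unit: list(tasks) for unit, tasks in assignments.items() if unit != failed}
    if not peers:
        raise ValueError("no healthy peers left to take over the workload")
    for task in orphaned:
        # Choose the least-loaded peer; ties are broken by unit name so that
        # every peer evaluating the same broadcast reaches the same answer.
        target = min(peers, key=lambda unit: (len(peers[unit]), unit))
        peers[target].append(task)
    return peers


if __name__ == "__main__":
    swarm = {"r1": ["a", "b"], "r2": ["c"], "r3": ["d", "e", "f"]}
    print(redistribute(swarm, failed="r3"))
    # {'r1': ['a', 'b', 'e'], 'r2': ['c', 'd', 'f']}
```

Because the rule is deterministic, each surviving unit could run it independently on the same broadcast and arrive at an identical plan without a central coordinator, provided all units share the same view of current assignments.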

Digital twins have also gained a substantial performance boost when integrated with edge-enabled infrastructure, providing accurate virtual replicas that mirror physical assets with high fidelity. For these virtual models to be effective for predictive maintenance and stress testing, they require a constant stream of site-specific data that reflects the exact current state of the machinery. Edge sensors provide these high-frequency updates, capturing subtle shifts in engine harmonics or structural integrity that generalized cloud models might overlook due to data compression or transmission delays. By synchronizing the digital twin locally, maintenance teams can run complex simulations that account for the specific environmental variables of a particular factory floor. This leads to a precision in forecasting that allows components to be replaced shortly before they fail, rather than relying on broad, often inaccurate, scheduled maintenance cycles. This high-fidelity mirroring keeps the virtual model a trustworthy proxy for its physical counterpart.
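A very reduced version of the forecasting step is to fit a trend to a locally sampled wear indicator and extrapolate to a failure threshold. The sketch below assumes wear grows roughly linearly, which real components often do not, and the function names and units are hypothetical. It stands in for the far richer simulations a full digital twin would run.

```python
from typing import List, Optional, Tuple


def fit_trend(times: List[float], values: List[float]) -> Tuple[float, float]:
    """Ordinary least-squares line through the samples; returns (slope, intercept).

    Requires at least two samples taken at distinct times.
    """
    n = len(times)
    t_mean = sum(times) / n
    v_mean = sum(values) / n
    denom = sum((t - t_mean) ** 2 for t in times)
    slope = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values)) / denom
    return slope, v_mean - slope * t_mean


def hours_until_threshold(
    times: List[float], values: List[float], threshold: float
) -> Optional[float]:
    """Estimate hours from the last sample until the indicator reaches the threshold."""
    slope, intercept = fit_trend(times, values)
    if slope <= 0:
        return None  # indicator is flat or improving; no failure predicted
    crossing_time = (threshold - intercept) / slope
    return max(0.0, crossing_time - times[-1])


if __name__ == "__main__":
    hours = [0.0, 1.0, 2.0, 3.0]
    vibration_mm_s = [1.0, 1.5, 2.0, 2.5]   # rising steadily by 0.5 per hour
    print(hours_until_threshold(hours, vibration_mm_s, threshold=4.0))  # 3.0
```

Sampling the indicator at the edge matters because the estimate is only as good as the resolution of the input: heavily compressed or delayed data flattens exactly the subtle trend this calculation depends on.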

Implementing Resilient Architectures for Sustainable Growth

Strategic implementation of edge computing requires a shift in how organizational leaders approach hardware procurement and the modernization of legacy industrial systems. Moving beyond the initial pilot phases, successful companies prioritize the deployment of ruggedized edge gateways that can withstand harsh environmental conditions while maintaining high computational throughput. This transition involves auditing existing sensor networks to identify which components can be retrofitted with intelligent controllers and which require a full replacement to support modern 5G connectivity. IT departments work closely with operational teams to establish standardized protocols that ensure interoperability between diverse hardware vendors, creating a cohesive fabric that supports both old and new technologies. By investing in scalable edge platforms, organizations avoid the pitfalls of vendor lock-in, allowing them to remain flexible as the landscape of industrial automation continues to evolve rapidly.

The transition toward edge-centric architectures also supports long-term sustainability by optimizing energy consumption and reducing the environmental footprint of global data transmission. Processing data locally can be considerably more energy-efficient than powering the massive infrastructure required to move petabytes of information across continents to hyperscale data centers. This move toward “green computing at the edge” not only lowers operational overhead but also aligns with corporate environmental goals that are increasingly important to stakeholders. Organizations that successfully adopt these strategies are better positioned to handle the data-intensive demands of the late 2020s. They cultivate a culture of continuous improvement, where the insights gained from localized intelligence are used to refine global business strategies. This proactive approach turns raw data into a primary driver of innovation, helping each investment in the Internet of Things deliver a measurable and lasting return.