The transition from traditional software engineering to a data-centric paradigm has redefined the role of the modern developer: no longer only a writer of syntax, the developer now acts as a data architect who interprets large streams of complex information. Software is increasingly engineered through rigorous analysis of real-world usage patterns and technical performance metrics rather than built on the intuition of designers or the speculative preferences of stakeholders. Industry data suggests that a large majority of professional developers have already integrated artificial intelligence tools into their daily workflows, which points to a lasting shift toward data-driven methodologies. As a result, the primary challenge for engineering teams is no longer just fixing syntax errors but managing the influx of telemetry data so that every update or new feature serves a measurable purpose in the broader application ecosystem.

Integrating AI and Predictive Analytics into the Engineering Workflow

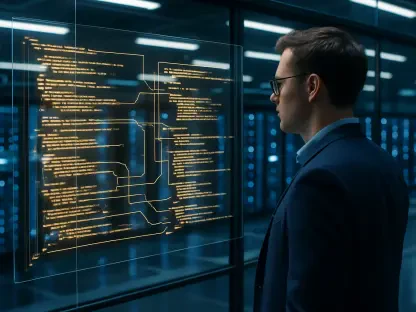

The combination of artificial intelligence and big data has allowed engineering teams to move beyond reactive troubleshooting toward continuous, proactive system optimization. By employing predictive analytics, developers can identify potential bottlenecks or structural failures before they affect the end user, which supports a higher standard of code integrity than was practical in previous years. Modern development environments use machine learning models to analyze code repositories in real time, highlighting security vulnerabilities or performance regressions while the code is still being written. This shift toward preventive maintenance reduces the long-term costs associated with technical debt and lowers the risk of catastrophic system crashes. For professional teams, integrating these tools has become a baseline requirement for staying competitive in an industry that demands near-perfect uptime and seamless cross-platform functionality.
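As a rough illustration of what "identifying a bottleneck before it impacts the user" can mean in practice, the sketch below fits a straight line to recent latency samples and estimates how many sampling intervals remain before the trend reaches a limit. The function name `intervals_until_breach`, the sample values, and the 200 ms limit are all hypothetical; real predictive tooling generally uses far richer models than a linear trend.

```python
def intervals_until_breach(samples, limit):
    """Estimate how many future sampling intervals remain before a linear
    trend fitted to `samples` reaches `limit`.

    Returns None when the trend is flat or falling, and 0.0 when the
    fitted line is already at or above the limit.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples to fit a trend")

    # Ordinary least-squares fit for evenly spaced samples at x = 0..n-1.
    x_mean = (n - 1) / 2
    y_mean = sum(samples) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(samples))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean

    if slope <= 0:
        return None  # no upward trend, so no projected breach

    x_cross = (limit - intercept) / slope
    return max(0.0, x_cross - (n - 1))


# Hypothetical p95 latency readings in milliseconds, one per minute.
latency_ms = [100, 110, 120, 130, 140]
print(intervals_until_breach(latency_ms, limit=200))  # 6.0
```

A steady climb of 10 ms per minute from 140 ms would reach 200 ms in six more minutes, which is the kind of early signal a team could act on before users notice.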

By interpreting large-scale datasets, development firms can turn raw information into actionable insights that guide the organic evolution of a product throughout its entire lifecycle. This proactive stance allows for the creation of responsive, tailored solutions that are optimized for actual usage patterns rather than theoretical models created during the planning phase. For example, behavioral analytics can pinpoint the exact moment a user encounters friction in an interface, allowing developers to deploy targeted patches that resolve the issue before it leads to churn. The standard for software quality has shifted from mere functionality to a deep resonance with the user experience, powered by data-driven decision-making. Consequently, the reliance on high-fidelity data has transformed software development into a more scientific discipline, where success is quantified through metrics like load times, conversion rates, and server resource efficiency rather than just the number of features released.
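To make the idea of pinpointing friction concrete, here is a minimal sketch of a funnel drop-off calculation. It assumes the analytics pipeline already provides, for each step of a flow, the number of users who reached it; the step names and counts are invented for illustration.

```python
def largest_drop_off(funnel):
    """Find the transition between consecutive funnel steps that loses
    the largest share of users.

    `funnel` is an ordered list of (step_name, users_reaching_step).
    Returns (from_step, to_step, drop_rate), or None if no transition
    can be evaluated.
    """
    worst = None
    for (prev_name, prev_users), (name, users) in zip(funnel, funnel[1:]):
        if prev_users == 0:
            continue  # nobody reached the previous step; rate is undefined
        drop_rate = 1 - users / prev_users
        if worst is None or drop_rate > worst[2]:
            worst = (prev_name, name, drop_rate)
    return worst


# Hypothetical onboarding funnel.
funnel = [
    ("landing", 1000),
    ("signup_form", 600),
    ("email_verify", 540),
    ("first_project", 200),
]
from_step, to_step, rate = largest_drop_off(funnel)
print(f"Largest drop-off: {from_step} -> {to_step} ({rate:.0%})")
```

In this made-up data the biggest loss occurs between email verification and creating a first project, so that is where a targeted fix would be investigated first.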

Streamlining the Path to Market Through Data-Driven MVPs

A critical challenge for many tech startups remains the phenomenon of feature bloat, where excessive complexity leads to wasted funding and a significant misalignment with actual market needs. Data analytics plays a vital role in curbing this trend by helping founders and product managers identify the essential features that drive the core value of a new application. Strategic development partners now use historical data and market analysis to advocate for a lean approach, often prioritizing a handful of high-impact functionalities over an exhaustive and expensive roadmap that might never see fruition. This data-backed scrutiny ensures that capital is preserved for scaling rather than being squandered on secondary features that users do not actually want. By analyzing competitor data and user intent, engineering teams can build a leaner, more focused product that addresses a specific market gap, ensuring that the initial launch is both impactful and cost-effective for the business owners.

This methodology allows companies to reach a functional version of their product quickly, enabling them to gather immediate, high-quality feedback from their first group of early adopters. By focusing first on the core architecture, the fundamental plumbing of an application, developers can manage technical debt and ensure the system is stable enough to handle future scaling. Analytics tools integrated into the Minimum Viable Product can track user interactions, giving developers a roadmap for the next development cycle that is based on observed behavior rather than guesswork. This iterative process, fueled by continuous data collection, reduces the risk of long-term failure by keeping the product aligned with the target audience’s actual behaviors. Ultimately, the move toward data-driven development cycles has reduced the time required to achieve product-market fit, allowing startups to pivot or persevere with greater confidence.
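One simple way interaction tracking can feed the next development cycle is by ranking features by adoption. The sketch below assumes events arrive as `(user_id, feature_name)` pairs and that the total number of active users is known; both the event shape and the feature names are assumptions made for this example.

```python
from collections import defaultdict


def feature_adoption(events, total_users):
    """Rank features by the share of users who used them at least once.

    `events` is an iterable of (user_id, feature_name) pairs.
    Returns a list of (feature_name, adoption_rate), highest rate first.
    """
    if total_users <= 0:
        raise ValueError("total_users must be positive")

    users_by_feature = defaultdict(set)
    for user_id, feature in events:
        # A set counts each user once, however often they use a feature.
        users_by_feature[feature].add(user_id)

    rates = {
        feature: len(users) / total_users
        for feature, users in users_by_feature.items()
    }
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


# Hypothetical events from an MVP with four active users.
events = [
    ("u1", "search"),
    ("u2", "search"),
    ("u1", "export"),
    ("u3", "search"),
    ("u1", "search"),
]
print(feature_adoption(events, total_users=4))
# [('search', 0.75), ('export', 0.25)]
```

Counting distinct users rather than raw events keeps one heavy user from making a niche feature look essential.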

Specialized Expertise in High-Stakes Development Sectors

Different industries require unique applications of data and strict adherence to compliance standards, which has led to a significant rise in specialized development partners with deep domain expertise. In sectors like fintech and healthcare, the focus has shifted toward audit-ready development where data security and regulatory compliance are considered paramount from the very first day of the project. These specialized firms ensure that logic errors do not escalate into massive legal liabilities by implementing rigorous automated testing and data validation protocols that align with current international standards. By treating data privacy and security as core functional requirements rather than a layer added at the end, these developers provide a foundation of trust that is essential for digital banking, insurance, and medical applications. This specialized approach ensures that the resulting software is not only technically sound but also legally resilient, protecting the company from the high costs of non-compliance.

In contrast, other development partners focus on the intricate intersection of design and data, using behavioral analytics to create functional interfaces that actively drive user engagement and retention. For startups operating in the Software as a Service or artificial intelligence space, the priority often shifts toward integrating large language models and optimizing the significant costs associated with data processing. These partners specialize in fine-tuning API usage and managing cloud infrastructure to ensure that high-performance AI features do not bankrupt the company through inefficient resource consumption. By selecting a partner with specific domain expertise in data science or user experience design, founders can leverage specialized insights to navigate the unique complexities of their particular market niche. This targeted collaboration allows for the development of bespoke features that provide a genuine competitive advantage, ensuring the software stands out in a crowded marketplace.
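One common cost-control tactic for model-backed features is to avoid paying twice for the same request. The sketch below wraps an arbitrary model-calling function with an in-memory cache keyed by a normalized prompt. `CachedModelClient` and `call_model` are hypothetical names, not part of any real SDK, and collapsing whitespace and case is an assumption that only suits prompts where those differences do not matter.

```python
import hashlib


class CachedModelClient:
    """Wrap a model-calling function with a simple in-memory response cache."""

    def __init__(self, call_model):
        # `call_model` is any function taking a prompt string and returning
        # a response; it stands in for a billed API call.
        self._call_model = call_model
        self._cache = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt):
        # Assumption: whitespace and letter case are not significant.
        normalized = " ".join(prompt.split()).lower()
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def complete(self, prompt):
        key = self._key(prompt)
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        response = self._call_model(prompt)
        self._cache[key] = response
        return response


def fake_model(prompt):
    # Stand-in for a real model call, used only for this demonstration.
    return f"echo: {prompt}"


client = CachedModelClient(fake_model)
client.complete("Summarize this  invoice")
client.complete("summarize this invoice")  # served from the cache
print(client.hits, client.misses)  # 1 1
```

A production setup would likely add expiry and a size limit, but even this simple hit and miss count gives a team data on how much repeated traffic it is paying for.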

Managing Scale and Performance in Complex Systems

As software platforms grow in complexity and user volume, managing high-load backends and maintaining peak performance becomes an increasingly data-intensive task for engineering teams. Specialized firms now focus on the technical requirements of high-traffic systems, using real-time telemetry to manage low-latency data transfers and keep nodes stable across distributed networks. For products involving hardware or the Internet of Things, data analytics is also used to optimize battery life and connectivity, so that devices stay reliable as the user base grows rapidly. These engineering teams analyze thousands of data points every second to balance server loads and prevent downtime, which is critical for maintaining user trust in a 24/7 digital economy. By using predictive scaling algorithms, they can anticipate traffic spikes and allocate resources dynamically, keeping the application responsive even under extreme pressure.
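The predictive scaling idea can be sketched with a very small forecast: smooth recent request rates, add headroom, and convert the result into a replica count. The function name, the smoothing factor `alpha`, the 20% headroom, and the per-replica capacity are all illustrative assumptions; simple exponential smoothing also lags behind sharp trends, so real autoscalers typically combine it with other signals.

```python
import math


def replicas_needed(recent_rps, capacity_per_replica, headroom=0.2, alpha=0.5):
    """Estimate the replica count for the next interval.

    Forecasts load with simple exponential smoothing over `recent_rps`
    (requests per second, oldest first), adds a safety margin, and
    divides by the capacity of a single replica.
    """
    if not recent_rps:
        raise ValueError("recent_rps must contain at least one sample")
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")

    forecast = recent_rps[0]
    for value in recent_rps[1:]:
        # Newer samples get weight alpha; older history decays geometrically.
        forecast = alpha * value + (1 - alpha) * forecast

    target = forecast * (1 + headroom)
    return max(1, math.ceil(target / capacity_per_replica))


# Hypothetical traffic ramp, with each replica assumed to handle 100 rps.
print(replicas_needed([100, 200, 400], capacity_per_replica=100))  # 4
```

Here the smoothed forecast is 275 rps, the headroom raises the target to roughly 330 rps, and rounding up yields four replicas.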

The decision to partner with a specific development firm often involves a strategic trade-off between development speed, total cost, and the technical specialization the project requires. Choosing a partner based solely on the lowest budget often leads to the accumulation of expensive technical debt and the eventual need for costly code rewrites as the product attempts to scale. The most effective development teams instead use data to provide honest, evidence-based feedback, often advising founders on which features to omit in order to maintain a high-performance, scalable architecture. They recommend focusing on the long-term health of the codebase so that the product can adapt to future technological shifts without requiring a complete overhaul. To move forward, stakeholders should seek out partners who integrate advanced monitoring tools and data visualization into their standard workflow, allowing for a transparent process in which every decision is backed by hard data.