The sheer velocity of digital transformation within the global banking sector has effectively shattered the traditional safety nets that once defined financial software stability. While legacy systems were historically protected by long release cycles and meticulous manual verification, the current landscape demands near-instantaneous deployments to meet consumer expectations for mobile and web-based services. This pressure has forced a total reevaluation of how institutions guarantee the integrity of trillions of dollars in daily transactions. As manual oversight becomes a physical impossibility at scale, a fundamental pivot is occurring, steering the industry away from human-led validation toward a future where software systems possess the inherent intelligence to test and verify their own operational logic. This shift represents more than just a technological upgrade; it is a profound reimagining of risk management where the “gatekeeper” is no longer a person with a checklist, but an autonomous agent capable of reasoning through complex financial scenarios in real time.

The Erosion of Traditional Continuous Testing Frameworks

The DevOps movement previously established continuous testing as the gold standard for software delivery, yet this once-reliable methodology is now struggling to survive in the hyper-active banking environment. Continuous testing relies on predefined, human-written scripts that act as rigid instructions for a machine to follow, checking for specific outcomes based on known inputs. However, as banking applications grow into interconnected webs of microservices and third-party APIs, the sheer volume of changes makes script maintenance a losing battle for quality assurance teams. When a front-end developer updates a single button or a back-end engineer modifies a database schema, hundreds of existing automated tests can break simultaneously. This creates a state of perpetual “script fatigue,” where engineers spend more time fixing the tests themselves than they do investigating actual software defects or developing new financial products.

This reliance on static automation creates a deceptive sense of security that can lead to catastrophic failures in a live production environment. Because traditional scripts only test what they are explicitly told to test, they often miss the nuanced, non-linear bugs that emerge from the interaction of disparate systems. In a bank, where a minor error in a currency conversion algorithm or a latency spike in a payment gateway can have massive regulatory and financial consequences, this lack of flexibility is unacceptable. The industry is reaching a tipping point where the manual effort required to keep test suites functional is exceeding the productive output of the development teams. Consequently, the transition to autonomous models is no longer a matter of choice for large-scale institutions but a necessary evolution to prevent operational gridlock and maintain the high-frequency deployment schedules that modern competition demands.

The Integration of Agentic AI and Predictive Quality Engineering

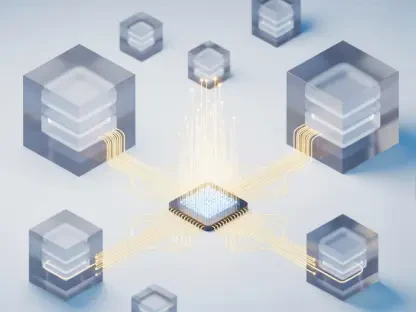

The emergence of Agentic AI marks a departure from instruction-based automation toward a goal-oriented approach where the system understands the “why” behind a test rather than just the “how.” Unlike traditional tools that require a human to map out every possible user path, these autonomous agents utilize advanced machine learning models to explore applications organically. By observing how users interact with banking portals and analyzing the underlying code structure, these systems can generate their own test scenarios dynamically. This means that if a new feature is introduced, the AI does not wait for a developer to write a script; it identifies the change, assesses the potential risk areas, and executes a suite of validations to ensure the entire ecosystem remains stable. This capability transforms testing from a reactive bottleneck into a proactive, self-healing component of the software development lifecycle.
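The "identify the change, assess the risk areas" step can be pictured as a walk over a service dependency graph. The following is a minimal sketch under stated assumptions, not a description of any particular product: the service names and the `DEPENDS_ON` map are hypothetical, and a real agent would derive the graph from code and runtime traces rather than a hand-written dictionary.

```python
from collections import deque

# Hypothetical dependency map: each service lists the services it calls.
DEPENDS_ON = {
    "mobile-app": ["payments-api", "auth-service"],
    "payments-api": ["fx-rates", "ledger"],
    "statements": ["ledger"],
    "auth-service": [],
    "fx-rates": [],
    "ledger": [],
}


def impacted_services(changed, depends_on):
    """Return the changed service plus every service that depends on it,
    directly or transitively."""
    # Invert the map so we can walk from a service to its callers.
    dependents = {}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(service)

    # Breadth-first search upward through the callers.
    seen = {changed}
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for caller in dependents.get(current, ()):
            if caller not in seen:
                seen.add(caller)
                queue.append(caller)
    return seen


# A change to the FX rate service puts its callers in scope for validation.
print(sorted(impacted_services("fx-rates", DEPENDS_ON)))
```

The set returned here is only the scope for validation; deciding which checks to run inside that scope is where the risk assessment described above would come in.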

Furthermore, these intelligent agents are capable of processing vast datasets that are far beyond the cognitive reach of human testers. By ingesting historical defect patterns, system logs, and real-time user session data, Agentic AI can build a predictive model of where software vulnerabilities are most likely to manifest. In a complex banking environment involving hybrid-cloud architectures and legacy mainframes, this level of insight is invaluable for identifying hidden dependencies. These autonomous systems do not merely check for binary “pass or fail” results; they look for anomalies in behavior, such as a slight delay in an authentication process or a minor discrepancy in data integrity that might signal a deeper systemic issue. This shift toward predictive quality engineering allows banks to catch catastrophic errors before they even reach the staging environment, significantly reducing the cost of remediation and protecting the institution’s reputation.
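To make the idea of looking beyond "pass or fail" concrete, here is a small illustrative sketch that flags authentication latencies that drift far from a historical baseline. It uses a plain z-score, which is a deliberately simple stand-in for the predictive models described above; the function name, the sample latencies, and the threshold of 3.0 are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def find_latency_anomalies(baseline_ms, observed_ms, threshold=3.0):
    """Return (index, value, z_score) for each observation whose distance
    from the baseline mean exceeds `threshold` standard deviations."""
    mu = mean(baseline_ms)
    sigma = stdev(baseline_ms)  # sample standard deviation
    if sigma == 0:
        raise ValueError("baseline has no variance; z-scores are undefined")

    anomalies = []
    for index, value in enumerate(observed_ms):
        z_score = (value - mu) / sigma
        if abs(z_score) > threshold:
            anomalies.append((index, value, round(z_score, 2)))
    return anomalies


# Hypothetical authentication latencies in milliseconds.
baseline = [120, 118, 125, 122, 119, 121, 123, 120]
observed = [121, 124, 190, 119]

# Every request "passed", yet the third one stands out from the baseline.
print(find_latency_anomalies(baseline, observed))
```

A binary assertion would have accepted all four requests; the statistical check surfaces the one that deserves a closer look.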

Operational Scalability Through Self-Healing Technologies

One of the most significant financial burdens facing modern banks is the ballooning cost of maintaining massive regression test suites that often contain tens of thousands of individual scripts. In many organizations, it is estimated that quality assurance engineers spend up to thirty percent of their time performing “script repair”—the tedious process of updating automated tests to reflect minor changes in the user interface. Autonomous testing platforms address this inefficiency directly through self-healing capabilities, which allow the system to recognize when an element has moved or been renamed and adjust the test parameters accordingly without human intervention. This automation of the mundane ensures that the testing infrastructure remains resilient and functional, even as the application underneath it undergoes rapid and constant iteration by various development squads.
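The self-healing behavior described above can be sketched as a fallback lookup: when the recorded locator no longer matches, the test compares the attributes it remembers against the elements now on the page and adopts the closest match. This is a simplified illustration, assuming elements are plain dictionaries with `id`, `text`, and `tag` keys; the `heal_locator` helper and the 0.8 threshold are hypothetical, and commercial tools typically weigh many more signals.

```python
from difflib import SequenceMatcher

ATTRIBUTES = ("id", "text", "tag")


def similarity(a, b):
    """String similarity between 0.0 and 1.0."""
    return SequenceMatcher(None, a, b).ratio()


def heal_locator(recorded, candidates, min_score=0.8):
    """Find the current element that best matches the recorded one.

    Returns (element, score), or (None, best_score) when nothing is
    similar enough to adopt safely.
    """
    best, best_score = None, 0.0
    for element in candidates:
        # Average the per-attribute similarity across id, text, and tag.
        score = sum(
            similarity(recorded.get(key, ""), element.get(key, ""))
            for key in ATTRIBUTES
        ) / len(ATTRIBUTES)
        if score > best_score:
            best, best_score = element, score
    if best_score >= min_score:
        return best, best_score
    return None, best_score


# Attributes captured during the last passing run (hypothetical).
recorded = {"id": "btn-transfer", "text": "Transfer funds", "tag": "button"}

# The page after a refactor renamed the button's id.
current_page = [
    {"id": "btn-cancel", "text": "Cancel", "tag": "button"},
    {"id": "btn-send-transfer", "text": "Transfer funds", "tag": "button"},
    {"id": "nav-home", "text": "Home", "tag": "a"},
]

healed, score = heal_locator(recorded, current_page)
print(healed["id"] if healed else "no confident match")
```

The threshold matters: set too low, the test may silently attach itself to the wrong element, which in a payments flow is worse than a visible failure. Returning no match and escalating to a human is the safer default.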

By stripping away the manual labor associated with maintenance, financial institutions can effectively decouple their quality assurance capacity from their headcount. This allows banks to scale their testing efforts in direct proportion to their software complexity without needing to hire an army of scripters. The resulting operational efficiency enables the reallocation of highly skilled human capital toward high-level strategic tasks, such as designing complex risk simulations, enhancing cybersecurity protocols, and performing deep-dive exploratory testing on sensitive financial modules. This transition ensures that the quality assurance department is no longer viewed as a cost center that slows down innovation, but as a high-velocity engine that empowers the bank to release more robust digital features at a pace that matches the speed of the global market.

Navigating the Challenges of Governance and Machine Accuracy

The adoption of autonomous testing in the banking sector introduces a unique set of challenges, particularly regarding the stringent regulatory requirements for transparency and auditability. Financial regulators often require banks to provide clear evidence of how their software was tested and why certain validation steps were taken. As testing moves from human-readable scripts to complex AI models, maintaining a clear “source of truth” becomes more difficult. To combat this, institutions are developing frameworks for “transparent autonomy,” where the AI is required to provide explainable logic for its actions. This involves creating audit logs that detail the reasoning behind machine-generated tests, ensuring that the bank can still prove its compliance with operational resilience standards while benefiting from the speed and thoroughness of an intelligent, autonomous system.
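One way to picture such an audit log is as a list of structured records, each stating what triggered a machine-generated test and why. The sketch below is an assumption-laden illustration rather than a regulatory template: the field names are hypothetical, and the hash chaining is an extra design choice added here to make later edits detectable, not something the frameworks described above are known to mandate.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

GENESIS_HASH = "0" * 64


@dataclass(frozen=True)
class AuditRecord:
    test_id: str
    trigger: str      # what change or signal prompted the test
    rationale: str    # the agent's stated reason for running it
    risk_area: str
    outcome: str


def _entry_hash(prev_hash, record_dict):
    payload = json.dumps(record_dict, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_record(log, record):
    """Append a record, linking it to the previous entry by hash."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS_HASH
    record_dict = asdict(record)
    entry = {
        "record": record_dict,
        "prev_hash": prev_hash,
        "entry_hash": _entry_hash(prev_hash, record_dict),
    }
    log.append(entry)
    return entry


def verify_log(log):
    """Return True only if no entry has been altered or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        expected = _entry_hash(prev_hash, entry["record"])
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != expected:
            return False
        prev_hash = entry["entry_hash"]
    return True


audit_log = []
append_record(audit_log, AuditRecord(
    test_id="gen-0001",
    trigger="schema change in ledger service",
    rationale="balance queries read the modified column",
    risk_area="data integrity",
    outcome="pass",
))
append_record(audit_log, AuditRecord(
    test_id="gen-0002",
    trigger="new FX rate provider",
    rationale="conversion results feed cross-border payments",
    risk_area="currency conversion",
    outcome="fail",
))
print(verify_log(audit_log))
```

Because each entry's hash covers the previous one, changing a rationale after the fact breaks verification for that entry, which gives auditors a simple integrity check on the record of machine decisions.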

Beyond compliance, the move to autonomy also addresses the inherent limitations of human foresight in detecting complex edge cases. Human testers tend to focus on the most common user journeys—the “happy paths”—and often overlook the rare, high-impact failure points that occur in a globally distributed banking network. Autonomous systems, by contrast, do not suffer from cognitive bias or fatigue; they can simulate millions of varied transaction scenarios, uncovering vulnerabilities in currency rounding, cross-border compliance checks, and multi-factor authentication flows that a human might never consider. This rigorous level of scrutiny is essential for maintaining the absolute integrity of financial data. While the transition requires a robust governance structure to manage the AI, the resulting increase in accuracy and coverage provides a level of security that traditional manual or scripted methods simply cannot replicate.
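The currency rounding case lends itself to a short illustration. The sketch below runs thousands of randomized scenarios against two ways of splitting a payment into equal parts and counts how often money is lost or created. Both `naive_split` and `split_evenly` are hypothetical functions written for this example, and the randomized loop is a simple stand-in for the large-scale scenario simulation described above.

```python
import random
from decimal import Decimal, ROUND_HALF_EVEN

CENT = Decimal("0.01")


def naive_split(total, parts):
    """Round each share independently; the shares may not sum to total."""
    share = (total / parts).quantize(CENT, rounding=ROUND_HALF_EVEN)
    return [share] * parts


def split_evenly(total, parts):
    """Split to the cent, handing leftover cents to the first shares."""
    cents = int(total * 100)
    base, remainder = divmod(cents, parts)
    shares = [base + 1] * remainder + [base] * (parts - remainder)
    return [Decimal(c) * CENT for c in shares]


def count_violations(split_fn, trials=10_000, seed=42):
    """Count scenarios where the shares do not add back up to the total."""
    rng = random.Random(seed)  # fixed seed keeps the run reproducible
    violations = 0
    for _ in range(trials):
        total = Decimal(rng.randint(1, 10_000_000)) * CENT
        parts = rng.randint(1, 12)
        if sum(split_fn(total, parts)) != total:
            violations += 1
    return violations


print("naive_split violations:", count_violations(naive_split))
print("split_evenly violations:", count_violations(split_evenly))
```

A hand-written happy-path test using a total that divides cleanly would pass for both functions. The randomized run exposes the naive version because it tries amounts such as 100.00 split three ways, where three shares of 33.33 add up to only 99.99.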

Redefining the Strategic Role of Quality Professionals

As autonomous systems take over the repetitive tasks of script creation and maintenance, the role of the quality assurance professional is undergoing a profound transformation. The era of the “manual tester” or the “automation scripter” is drawing to a close, replaced by a new generation of quality strategists and orchestrators. These professionals are no longer responsible for writing lines of code to check a login screen; instead, they are tasked with defining the high-level objectives, ethical boundaries, and risk profiles that guide the AI’s behavior. They act as the bridge between business requirements and machine execution, ensuring that the autonomous platform is focusing its vast computational power on the areas of the application that carry the highest financial or regulatory risk for the institution.

This cultural shift requires a move away from the traditional “gatekeeper” mentality and toward a collaborative model where human intuition and machine intelligence work in tandem. While the AI provides the scale, speed, and consistency required to test modern banking ecosystems, the human provides the nuanced understanding of market dynamics, customer sentiment, and institutional goals. This synergy allows for a more holistic approach to quality, where software integrity is treated as a strategic asset rather than a technical checkbox. Moving forward, the banks that fare best will be those that navigate this human-machine partnership well, empowering their engineers to lead with strategy while the autonomous systems handle the execution. This transition not only improves software quality but also creates a more engaging and high-value career path for technical professionals within the financial services industry.

Establishing a Resilient Future for Financial Verification

The transition toward autonomous testing is not merely an incremental improvement in software development, but a foundational requirement for any financial institution aiming to survive in an era of continuous delivery. To capitalize on this shift, organizations should begin by auditing their existing testing pipelines to identify the specific areas where script fatigue is most prevalent and maintenance costs are highest. Implementing autonomous tools in these “hot spots” allows for immediate gains in efficiency while providing a controlled environment to refine the AI models. Leaders must also prioritize the development of internal governance frameworks that ensure every machine-generated test remains auditable and explainable. This proactive approach to compliance will prevent future regulatory bottlenecks and foster trust in the autonomous systems that are increasingly responsible for the stability of the global financial infrastructure.
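The pipeline audit suggested above can start with something as simple as tallying repair effort per test suite. The following sketch assumes the team can export a list of (suite, hours spent on script repair) pairs from its ticketing or time-tracking system; the suite names and figures are invented for illustration.

```python
from collections import Counter


def rank_hot_spots(repair_events, top_n=3):
    """Total the repair hours per suite and return the costliest suites."""
    hours_by_suite = Counter()
    for suite, hours in repair_events:
        hours_by_suite[suite] += hours
    return hours_by_suite.most_common(top_n)


# Hypothetical export: (test suite, hours spent fixing broken scripts).
repair_events = [
    ("login-ui", 4.0),
    ("payments-api", 1.5),
    ("login-ui", 3.0),
    ("fx-conversion", 2.0),
    ("payments-api", 0.5),
    ("statements-ui", 6.0),
]

for suite, hours in rank_hot_spots(repair_events, top_n=2):
    print(f"{suite}: {hours} hours of script repair")
```

The suites at the top of this ranking are the natural first candidates for an autonomous pilot, since they are where maintenance effort is currently concentrated.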

Furthermore, the long-term success of this transition depends on a dedicated investment in the upskilling of the technical workforce. As the demand for manual scripting diminishes, the need for professionals who can manage AI-driven workflows and interpret complex data analytics will grow sharply. Banks must foster a culture of “quality engineering” where testing is integrated into the very fabric of the development process rather than being treated as a separate, final stage. By embracing these changes today, financial institutions can build a self-sustaining quality ecosystem that is capable of evolving alongside the rapidly changing technological landscape. This strategic commitment to autonomy will ultimately result in more resilient systems, faster time-to-market for innovative products, and a significant competitive advantage in a world where software reliability is the ultimate currency.