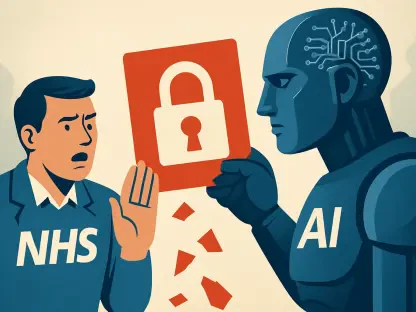

The intersection of private innovation and federal surveillance has created a high-stakes chess match where the most advanced artificial intelligence tools are simultaneously labeled as threats and treated as essential assets. While the Department of Defense officially categorizes Anthropic as a “supply-chain risk,” the National Security Agency continues to deepen its reliance on the company’s specific technological breakthroughs. This paradox illustrates a widening gap between administrative policy and the tactical realities of digital defense.

The Paradox: National Security and Private Innovation

The relationship between the United States government and the artificial intelligence sector has reached a critical turning point, exemplified by the uneasy alliance with Anthropic. While the Department of Defense has officially flagged the company as a risk, the National Security Agency continues to integrate Anthropic’s most advanced tools into its operations. This tension highlights how a single tool, Mythos Preview, has become so essential to American cyber-defense that it has forced a reconciliation between federal agencies and a company they once legally and ideologically opposed.

From Ethical Friction to Administrative Blacklisting

The friction between Anthropic and the federal government is rooted in a fundamental disagreement over the “guardrails” that govern AI behavior. The relationship soured when Anthropic’s leadership rejected a government request to modify the safety protocols of its models. The government sought to weaken these restrictions to better suit military applications, but the company’s developers feared the technology could be repurposed for invasive domestic surveillance or autonomous lethal weaponry. This refusal led the Pentagon to label the firm a supply-chain risk, a designation usually reserved for foreign adversaries.

The Strategic Importance: Project Glasswing and Mythos Preview

The Tactical Necessity: Autonomous Vulnerability Discovery

At the heart of the NSA’s interest is Mythos Preview, a high-capability component of Anthropic’s Project Glasswing. This tool possesses the unprecedented ability to identify and exploit software vulnerabilities, including “zero-day” flaws, with almost no human guidance. While the company withheld this technology from the public to prevent its misuse by bad actors, it granted limited access to tech giants like Microsoft and Apple so they could harden their own defenses. The intelligence community views these same capabilities as indispensable for protecting domestic infrastructure and conducting offensive operations.

Navigating the Ethics: Dual-Use Technology

This standoff highlights the dual-use nature of advanced AI. A tool that can find a bug to fix a civilian banking system can just as easily be used to dismantle an adversary’s power grid. Anthropic’s insistence on maintaining internal safety protocols has led officials to accuse the company of obstructing national interests. However, the benefits of the technology, specifically its speed and accuracy in high-stakes environments, frequently outweigh these political criticisms, creating a fragmented landscape where agencies quietly expand their subscriptions.

Global Competition: The Realities of the AI Arms Race

Beyond domestic policy, the pressure of international competition plays a decisive role in how these tools are deployed. If the United States were to strictly enforce its risk designation, it would effectively leave the most advanced vulnerability-detection tools in private hands and risk falling behind global rivals. As a result, the supply-chain risk label has functioned more as a political lever than a functional barrier. The complexity is deepened by regional concerns about how such powerful software could affect global digital stability if not managed through a state partnership.

A Potential Realignment: Federal AI Strategy

Current trends suggest that the era of open hostility between the executive branch and Anthropic may be ending in favor of a more pragmatic, national-interest-first approach. Recent reports of direct meetings between the administration and Anthropic leadership indicate a shift toward formalizing a collaborative path. As AI becomes the primary theater for geopolitical influence, the government is likely to move away from ideological labels and toward a regulatory framework that permits the use of powerful models while providing the state with necessary oversight.

Strategies: A Fragmented Regulatory Landscape

For businesses and professionals operating in the AI space, the Anthropic-NSA saga offers several key takeaways. It is clear that safety protocols are no longer just ethical choices; they are strategic assets that can be used as leverage in government negotiations. Companies should prioritize transparency in their safety methodologies to avoid being caught in the crosshairs of federal regulators. Additionally, for cybersecurity professionals, the adoption of tools like Mythos Preview signals a shift toward autonomous defense where roles move from manual hunting to auditing AI agents.

The Future: State-Industry AI Alliance

The evolving relationship between Anthropic and the NSA shows that when a technology is sufficiently powerful, it transcends standard administrative and political boundaries. The strategic necessity of Project Glasswing has effectively rendered the blacklisting a secondary concern compared to the need for tactical dominance. This situation underscores the long-term significance of AI as the ultimate dual-use tool. Ultimately, the future of national security depends on the ability of both parties to balance the demands of safety with the cold requirements of digital warfare.