The era of telecommunications companies acting as simple conduits for data is rapidly vanishing as the demand for real-time intelligence reshapes the global infrastructure landscape. T-Mobile is currently at the forefront of this shift, fundamentally redefining its identity by transitioning from a provider of mobile minutes and gigabytes into a high-performance physical AI computing platform. This strategic evolution marks a definitive departure from the “dumb pipe” business model, where carriers merely facilitated the movement of data between handheld devices and distant, centralized servers. Today, the company is positioning its expansive 5G network as the sophisticated nervous system of an increasingly automated world. By embedding powerful computing capabilities directly into its network architecture, T-Mobile provides the essential low-latency processing power required to sustain the next generation of robotics and autonomous systems.

The Transformation: From Data Pipe to Intelligence Platform

T-Mobile is now moving beyond traditional connectivity to become a central architect of the AI-driven physical world. This evolution is necessitated by the inherent limitations of centralized cloud computing, which struggles with the delays caused by moving data over long distances. The company is using its large geographic footprint of cell sites to host localized intelligence. This allows the network to function as a distributed brain, capable of making split-second decisions that a round trip to a distant data center would make too slow.

This transition signals a broader industry trend where the value of a network is no longer measured solely by its coverage map, but by its computational density. By integrating high-performance computing at the edge, T-Mobile is creating an environment where AI can interact with the physical world in real time. This strategy ensures that the company remains indispensable as industries ranging from manufacturing to urban management seek to automate their core operations.

The Evolution of Connectivity: The Rise of Edge Computing

To grasp the significance of T-Mobile’s current trajectory, one must consider the historical bottleneck created by centralized data centers. Traditionally, AI applications relied on servers located hundreds of miles away, creating latency that, while negligible for a simple web search, is catastrophic for a self-driving vehicle or a robotic surgical arm. The industry shift toward edge computing—processing data at the point of origin—has become the cornerstone of modern network strategy.
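
The distance penalty described above can be made concrete with a rough calculation. The sketch below is illustrative only: the distances (500 miles to a data center, 5 miles to an edge site) and the vehicle speed are assumed values, not figures from T-Mobile, and the estimate covers best-case propagation over optical fiber only, ignoring routing, queuing, and processing time.

```python
# Illustrative estimate of propagation delay; every distance and speed
# below is an assumed value chosen for the example, not a measured figure.

SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in a vacuum
FIBER_VELOCITY_FACTOR = 0.67        # light in fiber travels at roughly 2/3 of c
KM_PER_MILE = 1.609344


def round_trip_ms(distance_km: float) -> float:
    """Best-case round-trip propagation delay over fiber, in milliseconds."""
    speed_km_s = SPEED_OF_LIGHT_KM_S * FIBER_VELOCITY_FACTOR
    return 2 * distance_km / speed_km_s * 1000


def metres_travelled(speed_kmh: float, delay_ms: float) -> float:
    """Distance a vehicle covers while waiting delay_ms for a response."""
    return speed_kmh / 3.6 * delay_ms / 1000


if __name__ == "__main__":
    # Hypothetical scenarios: a distant data center versus a nearby edge site.
    scenarios = [("distant data center", 500), ("nearby edge site", 5)]
    for label, miles in scenarios:
        delay = round_trip_ms(miles * KM_PER_MILE)
        drift = metres_travelled(100, delay)  # vehicle at an assumed 100 km/h
        print(f"{label}: {delay:.3f} ms round trip, {drift:.4f} m travelled")
```

Under these assumptions, the 500-mile round trip costs roughly 8 ms before any server does work, while the 5-mile trip costs about a hundredth of that. Real-world latency is typically several times higher once network hops and compute time are included, which is why shortening the path matters most for control loops that run many times per second.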

By utilizing its existing infrastructure, T-Mobile is uniquely positioned to solve the latency problem that has long hindered the widespread adoption of real-time AI. This proximity to the end-user ensures that data does not have to travel through multiple layers of the internet before a decision is made. Consequently, the network becomes a reliable foundation for mission-critical applications that require absolute precision and instantaneous feedback.

Building the Infrastructure: The Physical AI Foundation

Integrating Nvidia Blackwell Architecture: Power at the Edge

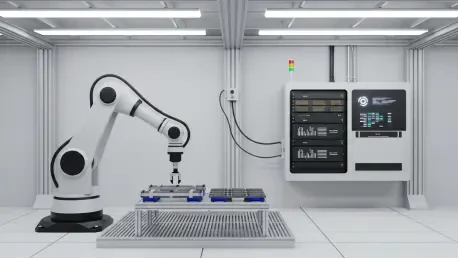

A critical component of T-Mobile’s strategy involves its deep collaboration with technology leaders like Nvidia and Nokia to decentralize computing power. The company has moved forward with installing high-performance Nvidia RTX PRO 6000 Blackwell Server Edition GPUs directly onto its cell towers and base stations. This integration allows the network to process complex AI workloads locally, effectively turning every cell site into a mini-data center.

By placing “Blackwell” chips at the tower level, T-Mobile creates near-instantaneous feedback loops. This capability is essential for autonomous systems that require sub-millisecond response times to navigate and interact with their physical surroundings safely. The move effectively migrates the heavy lifting of artificial intelligence away from the device and into the infrastructure itself, providing a level of scalability that individual hardware units cannot match.

Enabling Real-World Applications: The Role of AI-RAN

The transition to an AI-Native Radio Access Network (AI-RAN) is already yielding practical results in trial locations such as San Jose, California. These pilot programs demonstrate how the network can manage real-time traffic light optimization to reduce urban congestion or perform automated inspections of power lines to ensure structural integrity. Furthermore, developers are utilizing advanced tools like Nvidia’s Metropolis VSS 3 Blueprint to conduct rapid video analysis directly on the network, bypassing the need for slow uploads to a central cloud.

This allows for the instant summarization of hours of footage to pinpoint specific security or operational events as they happen. By offloading these heavy computational tasks to the network, companies can deploy cheaper, more streamlined hardware on robots and cameras. These devices no longer need expensive, heat-generating onboard processors, as the “intelligence” is provided by the T-Mobile network itself.

Overcoming Technical Barriers: Navigating Network Limitations

While the benefits are significant, building a physical AI platform involves navigating regional complexities and specific hardware demands. Wi-Fi lacks the range and seamless hand-off capabilities that mobile AI agents require, and legacy cellular networks were not designed for their latency demands. T-Mobile’s 5G Standalone (SA) network, by contrast, provides the consistent “always-on” connectivity required for massive-scale deployment.

A common misunderstanding in the market is that this edge infrastructure will entirely replace traditional data centers. In reality, T-Mobile is building a complementary layer that bridges the gap between the massive storage of the cloud and the immediate, tactical needs of the physical world. This ensures that AI can function reliably across diverse urban and industrial landscapes, maintaining performance even in high-density environments.

The Roadmap: 6G and Native AI Integration

The future of physical AI is inextricably linked to the ongoing evolution of cellular standards. T-Mobile’s 5G Advanced network is currently serving as a critical bridge toward the upcoming 6G era. Expected to begin rolling out within the next few years, 6G is being designed with AI native to its architecture rather than as an add-on feature. This shift will likely involve a move toward even higher frequencies and more sophisticated sensing capabilities.

In this upcoming phase, the network itself will act as a radar to “see” and interpret the environment. Industry experts predict that as AI becomes more autonomous, the demand for T-Mobile’s distributed computing power will grow exponentially. This transition could eventually make network-based AI processing as essential to modern life and industrial productivity as electricity or running water.

Strategic Takeaways: Thriving in an AI-Driven Society

The analysis of T-Mobile’s pivot reveals two major takeaways for businesses and technology leaders. First, the “intelligence” of a system is increasingly moving from the physical device to the network, allowing for more affordable and scalable hardware deployments across various sectors. Second, companies should prioritize 5G Standalone and upcoming 6G standards as the primary catalysts for meaningful industrial automation.

For professionals in logistics, manufacturing, and urban planning, the recommendation is to begin pilot programs that specifically leverage edge computing to reduce operational bottlenecks. Applying this information means shifting focus away from centralized cloud solutions and toward decentralized, low-latency network architectures. Such a shift is necessary to support the real-time decision-making required by modern autonomous agents.

Strategic Outlook: The Future of Network Intelligence

T-Mobile’s evolution into a physical AI platform addresses the critical latency and mobility challenges that have previously stalled the potential of widespread artificial intelligence. By embedding world-class processing power into the very fabric of its network, the company is securing its role as an indispensable infrastructure partner rather than a mere utility provider. Businesses should now look toward integrating their local sensing hardware with these distributed AI nodes to minimize capital expenditure on device-side processing. Moving forward, the focus must shift to developing software that can navigate the seamless hand-offs between different edge locations, ensuring that autonomous robots and vehicles maintain a constant stream of intelligence regardless of their movement. This transition sets a new benchmark for the industry, where the value of connectivity is inseparable from the capacity to process information at the point of action.