The boundary between biological intelligence and synthetic processing has blurred further with a study showing that photons can reproduce one of the human brain’s signature abilities: associative memory. The research, published in Physical Review Letters, was led by a collaborative team from the Institute of Nanotechnology of the National Research Council, the Italian Institute of Technology, and Sapienza University of Rome. Using integrated optical circuits, the scientists demonstrated that photons need not be mere carriers of information: their interference can itself implement a neural network. The result points toward a possible shift in artificial intelligence hardware, away from conventional silicon electronics and toward quantum photonic platforms in which the physics of the device performs the computation. If the approach scales, it suggests that part of the future of computing may lie in the physics of light.

Bridging Quantum Physics and Neural Architecture

Photons as Functional Artificial Neurons: The Neural Connection

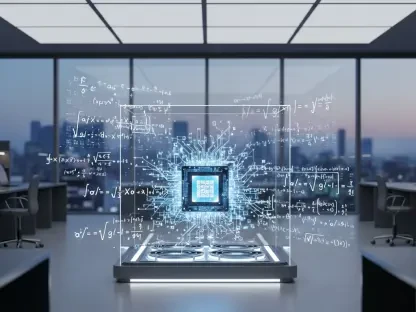

At the heart of this work is a photonic implementation of the Hopfield network, a foundational model of associative memory in neuroscience and statistical physics that explains how a system can retrieve a complete memory from a fragmented or partial cue. In a conventional computer, retrieving data typically requires its exact address, whereas the brain uses association to “fill in the blanks” from whatever fragment is available. The researchers sent identical photons through specialized integrated circuits in which the particles do not simply pass through: their quantum amplitudes interfere. This allows the system to encode data patterns in the physical state of the light itself, effectively turning the photons into active computational elements. As a result, the hardware does not merely execute an algorithm; its physical behavior embodies the algorithm. This is a step toward neuromorphic computing systems that behave more like networks of neurons than like arrays of transistors.
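To make the idea of associative recall concrete, here is a minimal sketch of a classical Hopfield network in plain Python. It is not the photonic implementation from the study: it assumes ±1 “neurons”, the standard Hebbian storage rule, and asynchronous sign updates, and the function names `train_hebbian` and `recall` are hypothetical. Two patterns are stored, and one is recovered from a cue with two corrupted bits.

```python
from typing import List

Pattern = List[int]  # each entry is +1 or -1


def train_hebbian(patterns: List[Pattern]) -> List[List[float]]:
    """Hebbian rule: J_ij = (1/N) * sum over patterns of xi_i * xi_j, with J_ii = 0."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for xi in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += xi[i] * xi[j] / n
    return weights


def recall(weights: List[List[float]], cue: Pattern, max_sweeps: int = 10) -> Pattern:
    """Asynchronously set s_i = sign(sum_j J_ij s_j) until no neuron changes."""
    state = list(cue)
    n = len(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(n):
            field = sum(weights[i][j] * state[j] for j in range(n))
            if field > 0:
                new = 1
            elif field < 0:
                new = -1
            else:
                new = state[i]  # on an exact tie, keep the current value
            if new != state[i]:
                state[i] = new
                changed = True
        if not changed:  # fixed point reached: the memory has been recalled
            break
    return state


if __name__ == "__main__":
    a = [1, 1, 1, 1, -1, -1, -1, -1]
    b = [1, -1, 1, -1, 1, -1, 1, -1]
    w = train_hebbian([a, b])
    cue = [-1, 1, 1, 1, 1, -1, -1, -1]  # pattern a with bits 0 and 4 flipped
    print(recall(w, cue) == a)  # True
```

The stored patterns act as attractors: each asynchronous update can only lower the network’s energy, so a partial or noisy cue typically settles into a nearby stored memory. The two example patterns are orthogonal, which keeps the toy case clean; random patterns interfere with one another more as more of them are stored.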

The move from electronic logic to photonic interference is a fundamental departure from the way information has been processed for decades. Conventional AI hardware relies on vast arrays of transistors that switch on and off to represent binary data, a process limited by how quickly charge can be moved and by the heat that electrical resistance generates. The photonic approach instead exploits quantum superposition: a single photon can occupy several paths of the circuit at once, and the amplitudes along those paths interfere when they recombine. Because an optical circuit transforms all of its inputs in a single pass of light, it can carry out many operations in parallel rather than through a sequence of logic gates. By exploiting these intrinsic properties of light, the researchers have built a platform in which information processing can be faster and less constrained than in silicon-based counterparts. This matters because modern AI models demand ever larger amounts of memory and computation.
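The superposition described above can be illustrated with the textbook single-photon Mach-Zehnder interferometer: a beam splitter puts the photon into both arms at once, a phase shifter delays one arm, and a second beam splitter makes the two amplitudes interfere. The sketch below assumes ideal, lossless 50:50 beam splitters with the matrix (1/√2)[[1, i], [i, 1]] (one common convention) and uses hypothetical helper names; it is not a model of the study’s circuit.

```python
import cmath
import math
from typing import List

Matrix = List[List[complex]]
Amplitudes = List[complex]


def apply(matrix: Matrix, amplitudes: Amplitudes) -> Amplitudes:
    """Multiply a 2x2 optical element matrix by the vector of mode amplitudes."""
    return [sum(row[k] * amplitudes[k] for k in range(len(amplitudes))) for row in matrix]


def beam_splitter() -> Matrix:
    """Ideal lossless 50:50 beam splitter (one common phase convention)."""
    s = 1 / math.sqrt(2)
    return [[s, 1j * s], [1j * s, s]]


def phase_shifter(phi: float) -> Matrix:
    """Adds phase phi to the upper arm (mode 0) only."""
    return [[cmath.exp(1j * phi), 0], [0, 1]]


def mzi_output_probabilities(phi: float) -> List[float]:
    """Send one photon into mode 0 and return the detection probability at each output."""
    state: Amplitudes = [1 + 0j, 0j]
    for element in (beam_splitter(), phase_shifter(phi), beam_splitter()):
        state = apply(element, state)  # after the first splitter the photon is in both arms
    return [abs(a) ** 2 for a in state]


if __name__ == "__main__":
    for phi in (0.0, math.pi / 2, math.pi):
        p0, p1 = mzi_output_probabilities(phi)
        print(f"phi = {phi:.3f}: P(port 0) = {p0:.3f}, P(port 1) = {p1:.3f}")
```

With this convention the photon exits port 0 with probability sin²(φ/2) and port 1 with probability cos²(φ/2), so the phase alone steers where the light goes. Both output amplitudes are produced in the same pass through the circuit, which is the sense in which optical processing is parallel.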

Quantum Interference: The New Computational Logic

Integrated optical circuits provide the stable, precisely controlled environment these photonic interactions require. They guide light through networks of waveguides in which interference performs mathematical operations, such as multiplying a set of light amplitudes by a matrix, in a single pass rather than through thousands of sequential instructions. Because photons do not repel one another the way electrons do, photons travelling in separate paths do not affect each other unless the circuit deliberately brings them together, which allows very dense and complex network layouts. This capability is crucial for emulating the dense connectivity of the human neocortex, where billions of neurons are linked in a web of simultaneous activity. The research team showed that by controlling the phases and amplitudes of the photons, they could build a robust associative memory that identifies and reconstructs patterns with high fidelity. This offers a tangible roadmap toward processors that mimic the brain’s internal structure.
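The “mathematical operations” performed by interference are, at their core, linear transformations. The sketch below builds a hypothetical three-mode circuit from Mach-Zehnder blocks (each a pair of 50:50 beam splitters plus two programmable phases, θ and φ), multiplies their matrices into one unitary, and applies it to a vector of input amplitudes. The parametrization, the `mesh` layout, and the phase values are assumptions for illustration; the study’s actual circuit design is not reproduced, and this single-photon picture omits the multi-photon quantum interference the researchers exploit.

```python
import cmath
import math
from typing import List, Tuple

Matrix = List[List[complex]]
Vector = List[complex]


def identity(n: int) -> Matrix:
    return [[complex(i == j) for j in range(n)] for i in range(n)]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def matvec(m: Matrix, v: Vector) -> Vector:
    return [sum(m[i][k] * v[k] for k in range(len(v))) for i in range(len(m))]


def mzi(theta: float, phi: float) -> Matrix:
    """Hypothetical 2x2 MZI: phase phi on mode 0, beam splitter, phase theta on mode 0, beam splitter."""
    s = 1 / math.sqrt(2)
    bs = [[s, 1j * s], [1j * s, s]]
    inner = [[cmath.exp(1j * theta), 0], [0, 1]]
    outer = [[cmath.exp(1j * phi), 0], [0, 1]]
    # Matrices act right to left: the outer phase is applied first.
    return matmul(bs, matmul(inner, matmul(bs, outer)))


def embed(block: Matrix, top: int, n: int) -> Matrix:
    """Place a 2x2 block acting on modes (top, top + 1) inside an n x n identity."""
    m = identity(n)
    for r in range(2):
        for c in range(2):
            m[top + r][top + c] = block[r][c]
    return m


def mesh(settings: List[Tuple[int, float, float]], n: int) -> Matrix:
    """Compose MZI stages (top_mode, theta, phi) in the order light meets them."""
    u = identity(n)
    for top, theta, phi in settings:
        u = matmul(embed(mzi(theta, phi), top, n), u)  # later stages multiply on the left
    return u


if __name__ == "__main__":
    settings = [(0, 0.7, 0.3), (1, 1.9, -0.4), (0, 2.2, 1.1)]  # arbitrary example phases
    u = mesh(settings, 3)
    x = [0.6 + 0j, 0.8 + 0j, 0j]  # input amplitudes, total power 1
    y = matvec(u, x)  # the whole circuit acts as one matrix-vector product
    print("output powers:", [round(abs(a) ** 2, 4) for a in y])
    print("total power:", round(sum(abs(a) ** 2 for a in y), 6))
```

Because each block is unitary, the whole mesh conserves optical power, and sending light through it stage by stage gives the same result as one matrix-vector product. Tuning the phases reprograms the matrix, which is how such circuits can hold the “weights” of a network in their physical configuration.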

Integrated photonics also allows these quantum systems to be scaled into compact hardware that could be integrated into existing technological infrastructure. Unlike early quantum computers, which required large cooling units and tightly controlled environments, these photonic circuits operate at far more manageable scales, making them a practical candidate for next-generation AI hardware. Because information is encoded in the quantum states of light, the memory resides in the physical configuration of the circuit itself. This removes the separation between memory and processing units, a division that has long slowed conventional computing architectures. By merging memory and computation into a single photonic process, the research team points toward a level of efficiency previously considered largely theoretical, and toward machines that could learn and remember with unprecedented speed.

Exploring the Physical Boundaries of Memory

The Threshold of Quantum Coherence: Limits of Information Density

A fascinating aspect of this study is the natural limit of quantum memory, which closely mirrors the cognitive constraints of the human brain. The researchers found that as long as the data load stays below a certain threshold, the system maintains “quantum coherence”: the photons behave in a unified, synchronized way, and the network recalls stored patterns with near-perfect accuracy. However, like any physical or biological system, the photonic network has a finite capacity. Beyond a specific saturation point, the density of stored information begins to destabilize the quantum states. This finding is significant because it offers a physical picture of why memory systems have capacity limits. Within its optimal range, the system retrieves associative data seamlessly, much as a healthy brain navigates vast amounts of interconnected information.
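The capacity limit has a well-known classical counterpart. In the classical Hopfield model with Hebbian storage, retrieval holds up while the load α = P/N (stored patterns per neuron) stays below roughly 0.138 and collapses beyond it. The sketch below assumes random ±1 patterns, N = 100 classical neurons, and hypothetical function names; it starts the network exactly at a stored pattern and reports how much of that pattern survives once the dynamics settle. It illustrates the general phenomenon only and does not reproduce the photonic system’s threshold, which the article ties to quantum coherence.

```python
import random
from typing import List

Pattern = List[int]


def random_patterns(n: int, p: int, rng: random.Random) -> List[Pattern]:
    """P unbiased random patterns of N entries, each entry +1 or -1."""
    return [[rng.choice((-1, 1)) for _ in range(n)] for _ in range(p)]


def hebbian_weights(patterns: List[Pattern]) -> List[List[float]]:
    """J_ij = (1/N) * sum over patterns of xi_i * xi_j, with zero diagonal."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for xi in patterns:
        for i in range(n):
            scaled = xi[i] / n
            row = w[i]
            for j in range(n):
                if j != i:
                    row[j] += scaled * xi[j]
    return w


def settle(w: List[List[float]], start: Pattern, max_sweeps: int = 20) -> Pattern:
    """Asynchronous sign updates; a neuron flips only if its local field opposes it."""
    s = list(start)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(s)):
            h = sum(wij * sj for wij, sj in zip(w[i], s))
            if (h > 0 and s[i] < 0) or (h < 0 and s[i] > 0):
                s[i] = -s[i]
                changed = True
        if not changed:
            break
    return s


def retrieval_overlap(n: int, p: int, seed: int = 0, probes: int = 5) -> float:
    """Mean overlap m = (1/N) sum_i xi_i s_i after settling from up to `probes` stored patterns."""
    rng = random.Random(seed)
    patterns = random_patterns(n, p, rng)
    w = hebbian_weights(patterns)
    overlaps = []
    for xi in patterns[:probes]:
        final = settle(w, xi)
        overlaps.append(sum(a * b for a, b in zip(xi, final)) / n)
    return sum(overlaps) / len(overlaps)


if __name__ == "__main__":
    n = 100
    for p in (2, 5, 10, 20, 40):
        print(f"alpha = P/N = {p / n:.2f}: mean overlap = {retrieval_overlap(n, p):.3f}")
```

At very low load every stored pattern is a stable fixed point, so the overlap stays at 1.0; at high load, crosstalk between patterns destabilizes bits and the state drifts away from the memory. Exact numbers vary with the random seed and show strong finite-size effects at N = 100.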

The researchers observed that the relationship between the number of photons and the complexity of the stored patterns determines the overall reliability of the memory. To remain effective, the system must therefore balance the volume of stored data against the physical resources of the optical circuit. This gives scientists a tangible model for studying information density in both artificial and biological systems. By understanding the mathematical thresholds of quantum coherence, engineers could design more resilient AI architectures that avoid the pitfalls of data overload. The study suggests that high-performance AI depends not only on raw memory capacity but also on preserving the coherence of the information being processed. In this way, the research bridges the abstract world of information theory and the physical world of quantum optics, offering new insight into how memory is maintained across different physical media.
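A short signal-to-noise argument for the classical Hopfield model shows why reliability depends on the ratio of stored patterns to available resources. Here the resource is the number of neurons N, which stands in, by analogy only, for the physical resources of the optical circuit; the derivation is the standard classical one, not the quantum analysis from the study. With P random ±1 patterns ξ^μ and Hebbian couplings, the local field on neuron i while the network sits on pattern 1 splits into a signal term and a crosstalk term C_i, and a bit is lost when the crosstalk overwhelms the signal (Φ denotes the standard normal cumulative distribution function):

$$
J_{ij} = \frac{1}{N}\sum_{\mu=1}^{P}\xi_i^{\mu}\xi_j^{\mu}\ \ (i \neq j),
\qquad
h_i = \sum_{j\neq i} J_{ij}\,\xi_j^{1}
    = \underbrace{\frac{N-1}{N}\,\xi_i^{1}}_{\text{signal}}
    + \underbrace{\frac{1}{N}\sum_{\mu\neq 1}\sum_{j\neq i}\xi_i^{\mu}\xi_j^{\mu}\xi_j^{1}}_{C_i\ (\text{crosstalk})}
$$

$$
p_{\text{err}} = \Pr\!\left[\xi_i^{1} h_i < 0\right]
\approx \Phi\!\left(-\frac{1}{\sqrt{\alpha}}\right)
= \frac{1}{2}\operatorname{erfc}\!\left(\frac{1}{\sqrt{2\alpha}}\right),
\qquad \alpha = \frac{P}{N}
$$

The crosstalk is a sum of (P-1)(N-1) independent ±1/N terms, so for large N it is approximately Gaussian with variance close to α. At α ≈ 0.138 this one-step estimate gives an initial bit-error rate of roughly 0.36%; the full statistical-mechanics treatment shows that beyond this load such errors cascade and retrieval collapses.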

Spin Glass Transitions: Understanding Systemic Failure

When the photonic network is pushed beyond its informational capacity, it undergoes a sharp physical phase transition into a state that physicists call a “spin glass.” In this state, the once-coherent interactions between photons become disordered, and the system can no longer retrieve or maintain stored memories. Lead author Gennaro Zanfardino described this phenomenon as a “quantum analogue to cognitive blackout,” in which the system is so overwhelmed by conflicting signals that it loses its functional identity. The transition is not a software error but a fundamental change in the physical state of the light within the circuit. It offers a rare window into how complex networks fail and could serve as a model for studying neurological disorders or cascading crashes in large-scale data infrastructure. The spin-glass state marks the ultimate limit of associative memory.
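The spin-glass language is more than a metaphor. In the classical Hopfield model, the network has an energy function with exactly the form of an Ising spin Hamiltonian: the neurons s_i = ±1 play the role of spins, and the symmetric Hebbian couplings J_ij built from the stored patterns play the role of interactions. Flipping spin k (from its current value s_k) changes the energy by an amount set by its local field h_k:

$$
E(\mathbf{s}) = -\frac{1}{2}\sum_{i\neq j} J_{ij}\, s_i s_j,
\qquad
h_k = \sum_{j\neq k} J_{kj}\, s_j,
\qquad
\Delta E_{\,s_k \to -s_k} = 2\, s_k h_k
$$

An update flips s_k only when s_k h_k < 0, so every flip lowers E, and stored patterns sit in valleys of this landscape. As more patterns are stored, each J_ij becomes a sum of many uncorrelated ±1/N contributions and behaves increasingly like a random coupling, so the model comes to resemble the Sherrington-Kirkpatrick spin glass, whose rugged landscape of many spurious minima Parisi’s solution describes. This classical picture is offered as background for the analogy; the transition reported in the study is a distinct, quantum photonic realization of it.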

This discovery draws heavily on the legacy of complex-systems theory, particularly Nobel laureate Giorgio Parisi’s work on disordered matter. By observing these transitions in a controlled photonic environment, the Italian research consortium has created a powerful “quantum simulator,” a tool for probing complex, disordered systems that are extremely difficult for classical supercomputers to model accurately. Being able to simulate these transitions means researchers can experiment with ways to prevent or delay system-wide failures in high-density networks. Potential applications range from modeling instabilities in financial markets to understanding degenerative brain diseases in which neural connectivity begins to fail. By studying the point where order turns into disorder, the scientific community may be able to develop more robust AI systems that can detect and mitigate the risks associated with extreme data-processing loads.

Sustainability and the Evolution of Hardware

Solving the AI Energy Crisis: Neuromorphic Solutions

One of the most immediate practical benefits of this photonic technology is its potential to ease the escalating energy demands of modern artificial intelligence. Data centers consume large amounts of electricity both to power silicon processors and to run the cooling systems that prevent thermal failure. As AI models continue to grow in size and complexity between 2026 and 2030, this consumption is becoming a significant environmental and economic bottleneck. Photonic platforms offer a more sustainable alternative because they compute with light instead of electrical current. Since light travelling through integrated waveguides does not suffer the resistive heating that electrical currents do, these systems can achieve high computational parallelism with potentially a fraction of the power required by conventional electronics. This shift toward energy-efficient quantum optics could be a critical step in keeping AI development sustainable.

The researchers emphasized that integrating hardware and algorithmic function is the true future of the field. In this paradigm, the physical structure of the machine is inseparable from its intelligence, because the photons themselves perform the logic of associative memory. This “neuromorphic” approach avoids the energy-intensive shuttling of data back and forth between separate processors and memory chips. Instead, data is processed in situ, which can sharply reduce latency and power consumption. For organizations deploying large-scale AI, the technology offers a way around the physical limitations of silicon while lowering their carbon footprint. Photonic memory circuits could redefine the standards of high-performance computing, making it possible to run sophisticated neural networks on devices with modest cooling and power requirements and thereby decentralizing access to AI.

Actionable Insights for the Future of Quantum AI

This research provides a roadmap for integrating quantum mechanics into the next generation of artificial intelligence hardware. To capitalize on these findings, developers and engineers can focus on hybrid systems that combine the speed of photonic associative memory with the versatility of existing electronic frameworks. The transition to light-based computing will require significant investment in integrated optical manufacturing, although the potential long-term gains in energy savings and processing power may justify the initial cost. Scientists can also use these photonic circuits as advanced simulators to study the complexities of the human brain, with possible benefits for both medical science and machine learning. By accounting for the limits of quantum coherence and the risk of spin-glass transitions, the industry could develop more resilient systems that manage data density with biological precision.

The study shows that light is a viable medium for emulating a core function of the most complex organ in the known universe. If organizations adopt these neuromorphic architectures in data centers, they could substantially reduce the energy consumed by AI training. As efforts to optimize silicon approach their limits, the move to quantum photonics may become a natural next step for those seeking to push the boundaries of computational intelligence. By moving memory directly into the physical state of the processor, the research team has shown a way around the traditional bottlenecks of computer architecture. This advance could catalyze a new era of technology in which the distinction between hardware and software fades. The successful mimicry of brain-like associative memory with photons sets a new benchmark for how information is stored, retrieved, and protected in an increasingly data-driven world.