The structural engineering profession is currently witnessing a transformative shift as the long-standing reliance on manual spreadsheet verification finally gives way to fully integrated, automated post-processing environments. For decades, the industry has utilized sophisticated Finite Element Analysis (FEA) software to model complex geometries and simulate environmental stresses, yet a surprising gap persisted between the generation of raw data and the final verification against safety codes. This gap, often referred to as the “spreadsheet bottleneck,” has forced engineers to export high-precision digital results into low-tech, manual documents for final compliance checks. As projects in 2026 grow in scale and complexity—ranging from massive offshore wind farms to intricate urban infrastructure—the risks associated with manual data entry have become untenable. The transition currently underway represents the third major technological leap for the field, moving beyond the simple adoption of computer-aided design toward a future where the logic of international design codes is baked directly into the analytical workflow. This evolution is driven not just by a desire for speed, but by a fundamental need for higher precision and more robust risk management in an increasingly demanding regulatory environment.

The Persistence of the Spreadsheet Bottleneck

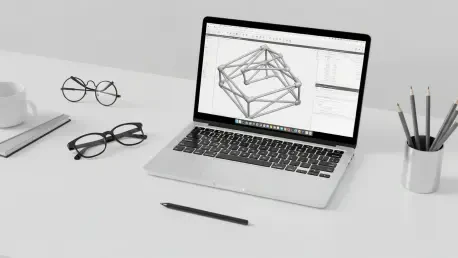

To comprehend the current state of structural engineering, it is necessary to examine the historical trajectory of the tools that define the craft. The profession moved from the era of slide rules and hand calculations to the adoption of computer-aided analysis in the 1970s and 1980s, which eventually culminated in the widespread use of Finite Element Analysis. However, a paradoxical stagnation occurred after the modeling phase became digitized. While engineers could simulate thousands of load scenarios, early FEA tools lacked the internal logic required to check those results against specific legal and safety standards, such as Eurocode 3 or AISC requirements. Consequently, firms developed a habit of extracting stress and displacement data into massive, custom-built spreadsheets. This practice persists today because it offers a sense of familiarity and perceived control, even though it disconnects the final safety check from the original digital model. The result is a fragmented workflow where the most critical phase of the project, the verification of structural integrity, is handled by tools that were never designed for complex engineering logic.

This manual approach introduces significant vulnerabilities, particularly through the necessity of “cherry-picking” load combinations. In large-scale maritime or civil projects, an engineer might be confronted with over 300 unique load combinations, representing everything from extreme weather to operational fatigue. Manually checking every single element against every possible combination is a Herculean task that is rarely feasible within a project schedule. To manage this workload, engineers frequently rely on professional judgment to select a subset of “governing” loads they believe will be the most critical for the structure. While this human-centric method has served the industry for years, it remains inherently prone to error. The actual governing load case is often a counterintuitive combination of forces that can be overlooked during manual selection, creating a hidden risk that may only surface when the structure fails. Automation removes this guesswork by exhaustively analyzing every scenario, so that no critical stress point is ignored due to human oversight or time constraints.
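The exhaustive search described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product works: the element names, load combination labels, stress values, and the single allowable stress are all hypothetical, and the check is a plain stress ratio rather than a real design-code formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Governing:
    element: str
    combination: str
    utilization: float


def governing_combinations(stresses, allowable):
    """Find the governing load combination for every element.

    stresses:  {element: {combination: stress in MPa}} (hypothetical layout)
    allowable: allowable stress in MPa (simplified stand-in for a code check)
    """
    result = {}
    for element, by_combo in stresses.items():
        # Scan every combination instead of a hand-picked subset.
        combo, stress = max(by_combo.items(), key=lambda kv: abs(kv[1]))
        result[element] = Governing(element, combo, abs(stress) / allowable)
    return result


# Hypothetical results for two elements and three load combinations.
stresses = {
    "beam_1": {"LC1": 120.0, "LC2": -210.0, "LC3": 95.0},
    "beam_2": {"LC1": 180.0, "LC2": 60.0, "LC3": 250.0},
}

governing = governing_combinations(stresses, allowable=235.0)
for g in governing.values():
    status = "OK" if g.utilization <= 1.0 else "FAIL"
    print(f"{g.element}: governed by {g.combination}, UR = {g.utilization:.2f} ({status})")
```

Note that the governing combination differs per element (LC2 for one, LC3 for the other), which is exactly the situation a single hand-selected “governing” load case can miss.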

Furthermore, the lack of traceability in manual verification creates a significant administrative burden during the audit and certification phases. When a regulatory body or a classification society like DNV or ABS requests evidence for a specific utilization ratio, the engineering team must often navigate a fragile web of interconnected spreadsheet cells. If a single formula is accidentally altered or a data point is copied incorrectly, the entire compliance report may be rendered invalid, leading to costly delays and potential legal liabilities. This process is not only risky but also consumes a disproportionate amount of an engineer’s time, which is spent on formatting tables and verifying cell links rather than on actual design innovation. By moving toward automated environments that maintain a direct link between the FEA results and the final report, firms can ensure that every calculation is traceable, repeatable, and entirely transparent to third-party auditors.

The Capabilities of Automated Post-Processing

The emergence of specialized post-processing tools is fundamentally changing how engineers interpret the structural intent of their models. Unlike traditional software that merely presents raw data, these automated systems can “recognize” the physical characteristics of a structure from a collection of abstract elements and nodes. For instance, advanced software can automatically identify that a series of collinear elements constitutes a single physical beam, allowing it to determine buckling lengths and joint types without manual tagging. In the context of offshore structures, these tools can detect shell fields between stiffeners and extract dimensions to perform complex plate buckling checks according to international standards. This level of automation ensures that the engineer is no longer a data entry clerk but a high-level supervisor who oversees the logic of the system. By integrating design-code logic directly into the software, these tools ensure that every calculation is consistent across the entire engineering team, eliminating the variations that often occur when different engineers use their own custom-built spreadsheets.
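As a rough sketch of what such “recognition” might involve, the following Python groups finite elements that share a node and point in the same direction into one physical member and reports its total length. The node coordinates, element names, and the `group_members` helper are hypothetical. A production tool would also need to account for restraints, bracing points, and intersecting members before treating this length as a buckling length.

```python
import math
from itertools import combinations


def unit_vector(a, b):
    d = [q - p for p, q in zip(a, b)]
    norm = math.sqrt(sum(c * c for c in d))
    return [c / norm for c in d]


def parallel(u, v, tol=1e-9):
    # Two unit vectors are (anti)parallel when their cross product vanishes.
    cross = (u[1] * v[2] - u[2] * v[1],
             u[2] * v[0] - u[0] * v[2],
             u[0] * v[1] - u[1] * v[0])
    return sum(c * c for c in cross) < tol


def group_members(nodes, elements):
    """Merge connected, collinear elements; return [(element ids, length)]."""
    parent = {eid: eid for eid in elements}

    def find(e):  # union-find root lookup with path compression
        while parent[e] != e:
            parent[e] = parent[parent[e]]
            e = parent[e]
        return e

    for e1, e2 in combinations(elements, 2):
        if set(elements[e1]) & set(elements[e2]):  # elements share a node
            u = unit_vector(*(nodes[n] for n in elements[e1]))
            v = unit_vector(*(nodes[n] for n in elements[e2]))
            if parallel(u, v):
                parent[find(e1)] = find(e2)

    groups = {}
    for eid in elements:
        groups.setdefault(find(eid), []).append(eid)

    def length(eid):
        a, b = elements[eid]
        return math.dist(nodes[a], nodes[b])

    return [(sorted(eids), sum(length(e) for e in eids)) for eids in groups.values()]


# Hypothetical model: two collinear elements along x, one column along z.
nodes = {1: (0, 0, 0), 2: (2, 0, 0), 3: (4, 0, 0), 4: (4, 0, 3)}
elements = {"e1": (1, 2), "e2": (2, 3), "e3": (3, 4)}

members = group_members(nodes, elements)
for eids, total in members:
    print(eids, f"length = {total:.1f} m")
```

Here `e1` and `e2` are reported as a single 4 m member, while the perpendicular `e3` stays separate.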

The shift toward automation is also a response to the intense economic pressures facing modern engineering firms. As of 2026, the structural engineering software market is experiencing rapid growth, with a significant portion of that investment directed toward post-processing and verification automation. This growth is justified by the massive efficiency gains reported by firms that have abandoned manual methods. Case studies from leading offshore and civil engineering companies indicate that the time required to generate comprehensive compliance reports can be reduced by as much as 70%. In one notable instance, a project that would have traditionally required a team of engineers working for several weeks was completed in just a few days using automated verification tools. These productivity gains allow firms to bid more competitively on large-scale contracts and manage higher volumes of work without a corresponding increase in headcount. The ability to produce thousands of pages of verified documentation almost instantly is no longer a luxury; it is a baseline requirement for competing in the global infrastructure market.

Enhancing Safety and Precision in Modern Design

By eliminating the need for manual sampling and human intuition in load selection, automated systems provide a level of risk mitigation that was previously unattainable. These systems are designed to perform exhaustive checks across all limit states, including ultimate, serviceability, and accidental conditions, for every element in the model. This comprehensive coverage ensures that the “worst-case scenario” is always identified, regardless of how counterintuitive it may seem. It also produces a standardized audit trail in which every result is directly linked to a specific clause of the governing design code, providing a level of legal and technical security that spreadsheets cannot match. If a design change is required late in the project, such as increasing a plate thickness or altering a beam section, the software can regenerate the entire compliance report with a single click. The documentation therefore always reflects the most current iteration of the design, which is nearly impossible to achieve with manual methods in a fast-paced project environment.
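The idea of a clause-linked audit trail that can be regenerated after a design change can be illustrated with a small Python sketch. Everything here is an assumption for illustration: the `CheckRecord` structure, the element name, the forces, and the material values are hypothetical, and the check is reduced to a simple tension resistance N = A · fy / γM0. The clause label (EN 1993-1-1, 6.2.3) is included only to show how a reference travels with each result and should be verified against the standard. A real tool would also apply different load and resistance factors per limit state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckRecord:
    element: str
    limit_state: str
    clause: str           # reference carried with every result
    demand_kn: float
    resistance_kn: float

    @property
    def utilization(self):
        return self.demand_kn / self.resistance_kn


def tension_checks(areas_mm2, forces_kn, fy_mpa=355.0, gamma_m0=1.0):
    """Check every element against every limit state (simplified tension check)."""
    records = []
    for element, area in areas_mm2.items():
        resistance = area * fy_mpa / gamma_m0 / 1000.0  # N converted to kN
        for limit_state, force in forces_kn[element].items():
            records.append(
                CheckRecord(element, limit_state, "EN 1993-1-1, 6.2.3", force, resistance)
            )
    return records


def build_report(records):
    """One report line per check, worst utilization first."""
    ordered = sorted(records, key=lambda r: r.utilization, reverse=True)
    return [
        f"{r.element} | {r.limit_state} | {r.clause} | UR = {r.utilization:.2f}"
        for r in ordered
    ]


# Hypothetical tie member with forces for two limit states.
forces = {"tie_1": {"ULS": 639.0, "ALS": 500.0}}

report_before = build_report(tension_checks({"tie_1": 2000.0}, forces))
# Late design change: larger cross-section, report regenerated from the same inputs.
report_after = build_report(tension_checks({"tie_1": 2500.0}, forces))

print(report_before[0])
print(report_after[0])
```

Because the report is derived from the model data rather than maintained by hand, rerunning the same function after the change is all that is needed to keep the documentation in step with the design.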

Looking at the current technological landscape of 2026, the integration of automation with digital twins and cloud computing is democratizing access to high-end engineering capabilities. Engineers are now increasingly using real-time sensor data from existing structures, such as bridges or oil rigs, to feed back into FEA models for ongoing structural health monitoring. Automated verification tools can then assess the remaining fatigue life of these structures based on actual usage patterns rather than theoretical estimates, allowing for more targeted maintenance and extended operational lives. While artificial intelligence is beginning to assist with preliminary tasks like checking mesh quality or identifying load paths, the industry continues to rely on the deterministic logic of code-based automation for final safety sign-offs. This balance ensures that while the speed of analysis increases, the legal and ethical responsibility of the engineer remains supported by transparent, rule-based calculations that are easily verified by human experts.
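One common way to turn measured usage into a fatigue estimate is linear damage accumulation (the Palmgren-Miner rule) combined with an S-N curve of the form N = C / S^m. The Python sketch below assumes that approach; the article does not state which method any given tool uses. The curve constants, the stress-range histogram, and the observation period are hypothetical, and the remaining-life figure assumes future usage matches the observed period.

```python
def cycles_to_failure(stress_range_mpa, c=2.0e12, m=3.0):
    """S-N curve N = C / S^m; C and m are hypothetical placeholder values."""
    return c / stress_range_mpa ** m


def miner_damage(histogram, c=2.0e12, m=3.0):
    """Palmgren-Miner sum D = sum(n_i / N_i) over a stress-range histogram.

    histogram: {stress range in MPa: number of measured cycles}
    """
    return sum(n / cycles_to_failure(s, c, m) for s, n in histogram.items())


def remaining_life_years(histogram, years_observed, c=2.0e12, m=3.0):
    """Extrapolate remaining life, assuming usage continues at the observed rate."""
    damage = miner_damage(histogram, c, m)
    return years_observed * (1.0 - damage) / damage


# Hypothetical cycle counts derived from five years of sensor data.
measured = {100.0: 200_000, 50.0: 1_600_000}

damage = miner_damage(measured)
remaining = remaining_life_years(measured, years_observed=5.0)
print(f"accumulated damage D = {damage:.2f}")
print(f"estimated remaining life = {remaining:.1f} years")
```

With these placeholder numbers each stress-range bin contributes a damage of 0.1, giving D = 0.2 after five years and roughly twenty years of remaining life at the same usage rate.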

A New Standard for Engineering Excellence

The transition from manual spreadsheets to automated verification environments marks a definitive turning point in the history of structural engineering. The increasing complexity of modern infrastructure projects demands a level of precision and traceability that traditional methods simply cannot provide. The “spreadsheet era” is effectively drawing to a close as the risks of human error and the economic costs of manual data management become too great for competitive firms to ignore. By adopting integrated tools like SDC Verifier and similar platforms, the industry is moving toward a unified workflow where analysis and verification occur within the same digital ecosystem. This change is not merely about technological adoption; it represents a fundamental shift in the engineering mindset, prioritizing systemic accuracy and exhaustive calculation over the historical reliance on professional intuition and manual sampling.

As the industry moves forward, the focus is shifting from simply completing calculations to optimizing designs for sustainability, material efficiency, and longevity. The time saved through automation can be reinvested in exploring more innovative structural solutions that reduce carbon footprints and improve the resilience of the built environment. Leaders in the field who embrace this digital transformation find themselves better equipped to handle the rigorous demands of modern regulatory frameworks and the increasing scale of global infrastructure projects. The actionable path for engineering firms involves the systematic decommissioning of legacy spreadsheets in favor of validated, automated libraries that ensure consistency across all projects. Ultimately, the move toward automation elevates the role of the structural engineer, allowing them to focus on high-level problem solving while the software checks that every bolt, weld, and beam meets the required standards of safety and performance.