Coordination swallowed more engineering hours than coding in many teams, and AI task managers stepped in to claw that time back by moving planning into chat and letting agents do the grunt work once demanded by boards and status meetings. The hidden tax often showed up as “work about work”: grooming tickets that duplicated conversations, writing status notes few people read, and hopping across Jira, email, and chat to chase the same updates. In data-first organizations, where process rigor and cross-team dependencies are heaviest, that load multiplied quickly. The question for a technology review was not whether AI could draft tasks, but whether it could run a reliable coordination layer that changed behavior, not just interfaces.

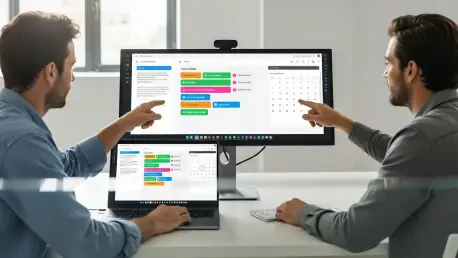

Unlike classic project tools that insisted on a separate destination app, modern AI task managers were embedded inside Slack, Teams, and email. That shift mattered because tasks are born in conversation. By turning messages and voice snippets into structured, traceable items with owners and dates, the systems reduced capture friction and kept work in flow. The review tested whether that embedding actually lowered context switching, delivered useful analytics, and survived the messy reality of IT operations, software delivery, and data/BI pipelines.

How It Works: Ambient Capture and Planning

The stack typically paired language models with calendaring, identity, and project APIs. When someone wrote “Fix the pipeline error by Thursday, assign to Alex,” the system parsed intent, entity names, and time expressions, then resolved “Alex” to a directory record and validated Thursday against team calendars. It created the task in the canonical tracker, posted a confirmation back to chat, and attached a source link for auditability. Voice added a transcription layer with domain vocabularies for services, incidents, and repos, which raised accuracy for technical terms.
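
The capture step described above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: it swaps the language model for plain regular expressions, and the `DIRECTORY` mapping, the `ParsedTask` type, and the `parse_message` function are all hypothetical names introduced here. It shows the shape of the flow: extract a title, resolve the owner against a directory, turn a weekday into a date, and flag anything unresolved for human confirmation.

```python
import re
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical directory (assumption): lowercase display name -> stable user id.
DIRECTORY = {"alex": "u-1001", "priya": "u-1002"}

WEEKDAYS = ["monday", "tuesday", "wednesday", "thursday",
            "friday", "saturday", "sunday"]
WEEKDAY_PATTERN = "|".join(WEEKDAYS)


@dataclass
class ParsedTask:
    title: str
    assignee_id: Optional[str]
    due: Optional[date]
    needs_confirmation: bool  # True when owner or date could not be resolved


def next_weekday(today: date, weekday_name: str) -> date:
    """Return the next occurrence of the named weekday strictly after `today`."""
    target = WEEKDAYS.index(weekday_name.lower())
    days_ahead = (target - today.weekday() - 1) % 7 + 1
    return today + timedelta(days=days_ahead)


def parse_message(text: str, today: date) -> ParsedTask:
    """Regex stand-in for the model-based parser described in the text."""
    due = None
    assignee_id = None

    m_due = re.search(r"\bby (" + WEEKDAY_PATTERN + r")\b", text, re.IGNORECASE)
    if m_due:
        due = next_weekday(today, m_due.group(1))

    m_who = re.search(r"\bassign(?:ed)? to (\w+)", text, re.IGNORECASE)
    if m_who:
        # Unknown names resolve to None rather than being guessed.
        assignee_id = DIRECTORY.get(m_who.group(1).lower())

    # The title is whatever precedes the deadline or assignment clause.
    splitter = r"\s+by\s+(?:" + WEEKDAY_PATTERN + r")\b|,\s*assign"
    title = re.split(splitter, text, maxsplit=1, flags=re.IGNORECASE)[0].strip()

    needs_confirmation = assignee_id is None or due is None
    return ParsedTask(title, assignee_id, due, needs_confirmation)
```

Calling `parse_message("Fix the pipeline error by Thursday, assign to Alex", date(2024, 1, 1))` yields a task titled "Fix the pipeline error", owned by `u-1001`, due on 2024-01-04. A name missing from the directory leaves `assignee_id` empty and sets `needs_confirmation`, which is the point at which a real system would ask in chat rather than write to the tracker.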

Planning moved from a static backlog to rolling inference. Models weighed historical throughput, assignee load, and meeting density to suggest deadlines and rebalance work when collisions appeared. Instead of manual triage sessions, contributors approved or edited suggestions inline. This mattered because it shifted effort from push (humans update tools) to pull (agents propose, humans confirm), while preserving a trail that managers could trust.
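
A rough sketch of how such a suggestion might be computed follows. The formula is an assumption made for illustration, not a documented algorithm from any product: it discounts historical throughput by the share of time spent in meetings, then queues the new task behind the assignee's open work. The `Assignee` fields and both function names are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Assignee:
    name: str
    tasks_per_focus_day: float  # historical throughput on meeting-free days
    open_tasks: int             # current load
    meeting_fraction: float     # share of working time spent in meetings, 0..1


def suggest_days_until_due(a: Assignee) -> int:
    """Estimate working days until a new task finishes, queued behind open work."""
    effective_rate = a.tasks_per_focus_day * (1.0 - a.meeting_fraction)
    if effective_rate <= 0:
        raise ValueError(f"{a.name} has no available capacity")
    return math.ceil((a.open_tasks + 1) / effective_rate)


def pick_assignee(candidates: Iterable[Assignee]) -> Assignee:
    """Propose the candidate with the earliest estimated finish."""
    return min(candidates, key=suggest_days_until_due)
```

The output is a proposal only. In the pull model described above, the suggested owner and date would be posted inline for a contributor to approve or edit.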

Core Capabilities and Measurable Performance

Automatic task creation from chat and voice closed the gap between “we should do X” and “X exists in the system.” In trials, teams reported fewer lost action items and faster ticket creation, largely because no one had to change apps. Smart grouping and prioritization improved schedule realism by using past completion rates and calendar constraints; teams avoided overpromising by aligning due dates with actual capacity, not wishful thinking. Automated reminders replaced the blunt “What’s the status?” with calibrated nudges keyed to deadline proximity and risk level, while status digests consolidated updates across tools into short, channel-ready briefs.
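
One way to picture a nudge keyed to deadline proximity and risk is as a gap between reminders that shrinks as the deadline nears and as risk rises. The sketch below is an assumption for illustration: the 4-hour and 72-hour bounds, the halving rule, and the function names are invented here, not taken from any product.

```python
from datetime import datetime, timedelta
from typing import Optional

# Illustrative bounds (assumptions): never nudge more often than every 4 hours,
# never leave an open task unmentioned for more than 72 hours.
MIN_GAP = timedelta(hours=4)
MAX_GAP = timedelta(hours=72)


def nudge_gap(now: datetime, due: datetime, risk: float) -> timedelta:
    """Return the minimum time between reminders; `risk` ranges from 0.0 to 1.0."""
    if not 0.0 <= risk <= 1.0:
        raise ValueError("risk must be between 0.0 and 1.0")
    remaining = due - now
    if remaining <= timedelta(0):
        return MIN_GAP  # overdue: remind at the fastest allowed cadence
    # Half the remaining time, shortened by up to 50% for the riskiest tasks.
    gap = (remaining / 2) * (1.0 - 0.5 * risk)
    return max(MIN_GAP, min(MAX_GAP, gap))


def should_nudge(now: datetime, due: datetime, risk: float,
                 last_nudge: Optional[datetime]) -> bool:
    """Decide whether enough time has passed since the previous reminder."""
    if last_nudge is None:
        return True
    return now - last_nudge >= nudge_gap(now, due, risk)
```

With 48 hours remaining, a low-risk task is nudged at most once every 24 hours and a maximum-risk task every 12. The floor on the gap is what keeps the cadence from turning into a stream of pings as the deadline approaches.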

Analytics stitched these flows together. By watching carryover, blocked states, and cycle time per assignee, the systems surfaced where work jammed. Emerging custom reporting through MCP-style (Model Context Protocol) servers let teams script dashboards that queried both task data and build or incident logs. The performance frame shifted from “tickets closed” to “interruptions prevented and handoffs shortened,” a better match to technical throughput.
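
Two of the metrics named above, cycle time per assignee and carryover, are simple to compute once task records carry start and completion dates. The sketch below assumes a hypothetical record shape (dictionaries with `assignee`, `started`, and `done` keys) and uses made-up sample data purely to show the calculation.

```python
from collections import defaultdict
from datetime import date
from statistics import median

# Made-up sample records (assumption); `done` is None for unfinished work.
TASKS = [
    {"assignee": "alex", "started": date(2024, 1, 1), "done": date(2024, 1, 3)},
    {"assignee": "alex", "started": date(2024, 1, 2), "done": date(2024, 1, 8)},
    {"assignee": "priya", "started": date(2024, 1, 1), "done": date(2024, 1, 2)},
    {"assignee": "priya", "started": date(2024, 1, 3), "done": None},
]


def median_cycle_days(tasks):
    """Median days from start to completion, per assignee, over finished tasks."""
    durations = defaultdict(list)
    for t in tasks:
        if t["done"] is not None:
            durations[t["assignee"]].append((t["done"] - t["started"]).days)
    return {name: median(days) for name, days in durations.items()}


def carryover_rate(tasks, period_end):
    """Fraction of tasks not completed by the end of the planning period."""
    if not tasks:
        return 0.0
    carried = sum(1 for t in tasks
                  if t["done"] is None or t["done"] > period_end)
    return carried / len(tasks)
```

On the sample data, Alex's median cycle time is 4 days and Priya's is 1, and half the tasks carry over past a period ending 2024-01-05. A real deployment would pull these records from the tracker rather than a hard-coded list.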

What Sets This Wave Apart

Competitors offered task bots, email plug-ins, or generic copilots, but many stalled at parsing and posting. The distinctive move here was deep, bidirectional integration: create, assign, update, and close entirely in-channel, with authoritative writes to Jira, Git-based planners, or ITSM suites. That depth mattered because shadow systems erode trust; when the chat layer was the surface but the tracker remained the source of truth, adoption held. Another differentiator was continuous analytics rather than weekly reports, which enabled rolling plan correction without ceremony.

Products that leaned into domain context stood out. For instance, platforms like Voiset emphasized voice capture with strong entity recognition for incidents and services, while integrating with ticketing ecosystems so a spoken update synced across dashboards. Where rivals needed users to learn bot commands, stronger entrants listened to natural phrasing and handled role-resolved permissions, which translated into lower training overhead and quicker time to value.

Team-Level Effects

IT operations gained the most from automated incident and change hygiene. AI-first dashboards assembled backlogs with dependency callouts, trimmed redundant follow-ups, and kept status synchronized across chat rooms and ITSM records. Mean time to update dropped, which, while not a direct proxy for resolution, freed operators to fix rather than narrate. Software teams traded sprint bookkeeping for lightweight, in-thread approvals: handoffs from dev to QA triggered by merged PRs, summaries pulled from commit messages, and release notes drafted from completed scope.

Data and BI groups benefited from less status churn and tighter fit with analytical workflows. Tasks linked directly to queries, models, and dashboards, so progress bubbled up through the same systems that produced business insights. The result was fewer meetings to “align” and more time for modeling and interpretation, with analytics revealing when ad-hoc requests repeatedly derailed planned work.

Limits, Risks, and Trade-Offs

Three constraints defined the ceiling. First, data quality and hallucinations: misparsed owners or misread dates could cascade into bad schedules. Systems mitigated this with human-in-the-loop confirmation, audit trails, and strict entity validation, but edge cases persisted in noisy threads and uncommon time phrases. Second, integration depth: shallow connectors produced desynchronized states, defeating the purpose; deep integrations demanded security reviews, rate-limit handling, and robust error recovery. Third, privacy and compliance: always-listening agents raised concerns in regulated environments, pushing teams to adopt opt-in channels, role-based access, and redaction policies.

There was also a behavioral cost. If reminders multiplied, notification fatigue set in. The more successful deployments tuned nudges to risk and velocity, elevated exceptions over routine pings, and exposed controls so users could dial intensity up or down. Change management remained decisive: without clear norms about what the agent owns and what humans own, teams reverted to manual updates “just in case,” erasing gains.

Buying Advice

Evaluation hinged on hands-on evidence, not demo gloss. Look for end-to-end chat or voice flows that create, assign, and update tasks without opening another app. Verify calendar, email, and identity resolution under real names and aliases. Probe Jira, Git, and ITSM write-backs for idempotency and auditability. Judge overhead reduction by counting interface hops and status pings before and after a pilot. Finally, insist on analytics that expose throughput, carryover, and bottlenecks, with customizable outputs; MCP-style bridges were a strong sign that reporting could adapt to local needs.
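
To make the idempotency check concrete, the sketch below shows the property a buyer would be probing for: replaying the same chat message must not create a second task, and every attempt should leave an audit entry. `InMemoryTracker` is a hypothetical stand-in written for this example. It is not the API of Jira or any ITSM suite, and deriving the key from a source message id is one possible design, assumed here.

```python
import hashlib
from typing import Dict, List, Tuple


class InMemoryTracker:
    """Hypothetical tracker stand-in that deduplicates writes by source message."""

    def __init__(self) -> None:
        self._task_by_key: Dict[str, str] = {}
        self.audit_log: List[Tuple[str, str]] = []

    def create_task(self, source_message_id: str, title: str) -> str:
        # The idempotency key is derived from the originating chat message,
        # so a retried or replayed write maps to the same task.
        key = hashlib.sha256(source_message_id.encode("utf-8")).hexdigest()
        if key in self._task_by_key:
            self.audit_log.append(("duplicate_ignored", source_message_id))
            return self._task_by_key[key]
        task_id = f"TASK-{len(self._task_by_key) + 1}"
        self._task_by_key[key] = task_id
        self.audit_log.append(("created", source_message_id))
        return task_id

    def task_count(self) -> int:
        return len(self._task_by_key)
```

During a pilot, the equivalent test is to resend the same message, or force a retry after a simulated timeout, and confirm that the tracker still holds one task and that the audit trail records both attempts.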

Conclusion

AI task managers reduced “work about work” by moving capture, first-pass planning, and follow-ups into the places where conversations already happened, while tying results back to authoritative systems. The unique value came from ambient, bidirectional integration and continuous analytics that reshaped daily behavior, not just UI. For teams in IT, software, and data, the trade favored adoption when safeguards, deep connectors, and tuning against notification fatigue were in place. The practical next steps were to pilot in one high-churn workflow, measure hops and lead time deltas, harden identity and calendar resolution, and only then expand to adjacent teams. On balance, the technology earned a positive verdict: when implemented with rigorous integrations and clear norms, it paid back with fewer interruptions, faster cycles, and decisions grounded in live operational evidence.