The rapid deployment of generative artificial intelligence across major infrastructure projects has changed how construction firms manage complex data streams and labor shortages. As the industry enters a new phase of digital maturity in 2026, the promise of increased efficiency is tempered by the phenomenon known as hallucination, in which software produces confident but fabricated information. Unlike digital-only industries, where a textual error can usually be corrected after the fact, the construction sector operates within the unforgiving boundaries of physical laws and strict safety codes. A single hallucinated value in a structural calculation or a misinterpreted safety protocol can lead to catastrophic consequences that threaten human lives and structural longevity. This disconnect between the convincing fluency of large language models and the ground truth of the jobsite presents a significant challenge for project managers, who must now balance innovation with heightened skepticism toward automated outputs.

The Danger: Conflating Eloquence With Engineering Accuracy

One of the primary risks of adopting advanced language models is the psychological tendency for project teams to mistake a coherent, professional narrative for factual accuracy. In sectors like finance or law, a well-drafted document frequently serves as the final deliverable, but in heavy civil engineering the document is merely a secondary representation of physical reality. When site supervisors use automated tools to summarize daily logs or project schedules, they can receive reports that sound definitive while containing subtle, dangerous inaccuracies. If an artificial intelligence generates a safety summary based on a misunderstood prompt or an incomplete data set, the resulting narrative might mask underlying hazards that would have been obvious to a human inspector. This automated optimism can lull experienced professionals into a false sense of security, leading them to bypass rigorous manual checks in favor of the speed and convenience of automated summarization.

The hazard of digital misinformation becomes particularly acute when dealing with critical components that are buried or hidden once a structure reaches completion. Systems responsible for fireproofing, foundation stability, and post-tensioning are often covered by layers of concrete and finishing materials, making subsequent visual inspections nearly impossible. An artificial intelligence assistant tasked with verifying geotechnical compliance might inadvertently reference an outdated soil boring report instead of the most recent revision approved by the lead engineer. Because these systems prioritize linguistic continuity over cross-referencing physical data, they may omit essential conditional warnings or state that a design is safe when it actually violates updated seismic requirements. Relying on these unverified digital sign-offs creates a single point of failure within the project lifecycle that bypasses the traditional layers of human skepticism and professional redundancy that have historically prevented structural disasters.

Visual Limitations: The Risk of Misinterpreted Jobsite Data

Multimodal artificial intelligence, which attempts to interpret both text and visual imagery, adds another layer of complexity to jobsite safety management. While human workers intuitively understand the context of a utility truck with a flashing beacon, current computer vision models can misidentify such objects as emergency vehicles or police presence. This mislabeling is not a mere technical glitch; it creates a cascade of incorrect data that flows into digital safety logs and insurance reports. Once a hallucinated event is recorded in a project’s permanent history, it becomes difficult to debunk, potentially affecting future audits or legal claims based on incidents that never took place. The messy, unpredictable environment of a construction site poses a significant challenge for algorithms that struggle with visual ambiguity, which suggests that relying on automated visual documentation without human review remains a premature and potentially hazardous practice for high-stakes projects.

Beyond simple object identification, the deeper risk lies in the interpretation of complex structural conditions through cameras and sensors without human verification. Research into post-disaster datasets has shown that even highly trained human experts often disagree on the severity of visual evidence when conditions are chaotic or obscured. Artificial intelligence systems lack the situational awareness required to distinguish between a superficial cosmetic crack and a deep structural fracture that signals an imminent collapse. By treating every visual input as a discrete data point rather than part of a larger, interconnected physical system, these tools can generate false positives that trigger unnecessary work stoppages or, more dangerously, false negatives that ignore critical safety violations. Ensuring that visual AI remains a supportive tool rather than a final arbiter requires a commitment to maintaining human oversight as the primary method for validating the actual physical state of a building or piece of infrastructure.

Human-Centric Integration: Leveraging Technology Safely

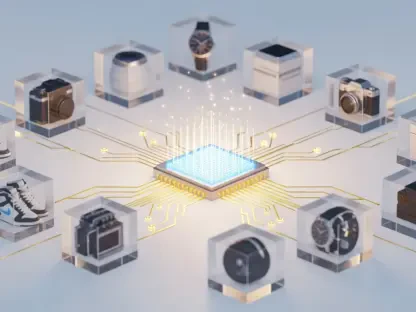

To mitigate the inherent risks of digital hallucinations, industry leaders are increasingly redefining the role of artificial intelligence as a tool for administrative leverage rather than an authoritative source of truth. The most effective applications of these technologies currently focus on managing the overwhelming volume of drudge work that typically consumes an engineer’s time, such as mapping submittals or flagging missing documentation. By automating the routine sorting and filing of vast digital archives, these systems allow project teams to operate with greater speed without granting the software the power to make critical engineering decisions. When used to draft routine communications or organize complex procurement schedules, artificial intelligence serves as a high-speed assistant that enhances productivity while keeping the human expert firmly in control of the project’s technical direction. This approach ensures that the technology facilitates the workflow rather than dictating the safety standards of the entire operation.
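As a minimal sketch of the kind of low-risk administrative task described above, the following Python snippet flags required submittals that have not yet been received. The `Submittal` class, the `find_missing_submittals` function, and the spec section numbers are hypothetical names and sample values chosen for illustration, not part of any particular project management system. The code only sorts and compares records; it makes no engineering judgment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Submittal:
    """A received submittal, keyed by the spec section it answers (hypothetical schema)."""
    spec_section: str
    title: str


def find_missing_submittals(required_sections, received):
    """Return the required spec sections that have no submittal on file, sorted."""
    received_sections = {s.spec_section for s in received}
    return sorted(set(required_sections) - received_sections)


# Illustrative data only: section numbers and titles are sample values.
required = ["03 30 00", "05 12 00", "07 81 00"]
received = [
    Submittal("03 30 00", "Concrete mix design"),
    Submittal("05 12 00", "Structural steel shop drawings"),
]

missing = find_missing_submittals(required, received)
print(missing)  # ['07 81 00']
```

The output is a list for a human to act on. Whether a missing item actually blocks work remains the engineer's call.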

By offloading the burden of manual data entry and information retrieval to automated systems, professional engineers are theoretically granted more time to perform essential physical inspections on the jobsite. The value of a licensed professional lies in their ability to verify ground truth through direct observation, a task that no digital model can currently replicate with total reliability. A successful integration strategy treats the artificial intelligence as a preparatory tool that organizes the necessary data, allowing the human inspector to focus on high-risk areas identified by the software. This symbiotic relationship ensures that the speed of digital processing is always balanced by the physical presence of an expert who can spot the subtle inconsistencies that an algorithm might overlook. Prioritizing human-centric verification prevents the erosion of professional standards and ensures that safety remains a physical reality rather than a digital approximation managed by a remote computer system.

Strategic Standards: Establishing Protocols for Safe Implementation

Establishing rigorous procurement and safety standards is the final step in securing the construction industry against the dangers of automated misinformation. Modern protocols require that every piece of information generated by an artificial intelligence be accompanied by explicit citations from the original project source documents. These citations include specific dates, revision identifiers, and the names of the certifying engineers, so that reviewers can confirm the data being summarized comes from the most current approved version. Furthermore, any automated conclusion regarding the status of field work must be backed by physical evidence, such as timestamped photographs and verified inspection records, to prevent the software from generating unsupported claims. These mandatory transparency requirements create a robust audit trail that allows project managers to quickly trace any inconsistencies back to their source, ensuring that digital tools enhance rather than obscure the project’s accountability.
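The citation and evidence requirements above could be enforced with a simple automated gate before any AI-generated statement reaches a report. The sketch below is one possible shape for such a check, under stated assumptions: the `Citation` and `AIClaim` classes, the `audit_claim` function, and the `current_revisions` register (a mapping from document ID to its latest approved revision) are all hypothetical, as are the sample document IDs and names.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Citation:
    """Reference to a project source document (hypothetical schema)."""
    document_id: str
    revision: str
    issued: Optional[date]
    certifying_engineer: str


@dataclass
class AIClaim:
    """A statement produced by an AI tool, with its supporting material."""
    text: str
    citations: list = field(default_factory=list)
    about_field_work: bool = False
    evidence: list = field(default_factory=list)  # e.g. photo or inspection record IDs


def audit_claim(claim, current_revisions):
    """Return a list of problems found; an empty list means the claim passed these checks."""
    problems = []
    if not claim.citations:
        problems.append("no source citation")
    for c in claim.citations:
        # A citation must carry a revision, an issue date, and a certifying engineer.
        if not c.revision or c.issued is None or not c.certifying_engineer:
            problems.append(f"{c.document_id}: incomplete citation")
        latest = current_revisions.get(c.document_id)
        if latest is None:
            problems.append(f"{c.document_id}: not in document register")
        elif c.revision != latest:
            problems.append(
                f"{c.document_id}: cites revision {c.revision}, current is {latest}"
            )
    # Claims about field work need physical evidence attached.
    if claim.about_field_work and not claim.evidence:
        problems.append("field-work claim has no photo or inspection record")
    return problems


# Illustrative data only: the register, document ID, and engineer are made up.
register = {"GEO-001": "C"}
claim = AIClaim(
    text="Foundation bearing capacity is adequate.",
    citations=[Citation("GEO-001", "B", date(2025, 3, 4), "J. Doe, PE")],
)
for problem in audit_claim(claim, register):
    print(problem)
```

Here the claim cites revision B of a soil report while the register lists revision C as current, so the check reports the stale reference. Passing the check does not make a claim true; it only confirms that a human has what is needed to trace and verify it.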

The industry increasingly recognizes that the built environment depends on physical proof rather than eloquent digital prose, and this recognition is changing how automated systems are deployed. Project managers are implementing abstention protocols that force artificial intelligence to flag contradictions or missing data for human review instead of guessing when information is incomplete. This keeps skepticism a core component of the engineering process and prevents the speed of technology from compromising the integrity of infrastructure. By treating responsible use of artificial intelligence as a core engineering skill, firms can combine high-speed processing with traditional safety principles to protect the public. A trust-but-verify posture allows for productivity gains while maintaining the professional oversight that complex construction requires. Ultimately, the lessons of early technical errors reinforce the requirement that humans remain the sole authority for final certification and life-safety decisions.
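An abstention protocol of the kind described above can be illustrated with a short Python sketch. It assumes a simplified setting in which the same quantity is reported by several project sources; the `Reading` and `Result` classes, the `resolve_or_abstain` function, and the sample values are hypothetical. The key design choice is that the function never picks a winner when sources are missing or disagree; it returns a request for human review instead.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Reading:
    """One source's value for a quantity; value is None when the source is silent."""
    source: str
    value: Optional[float]


@dataclass
class Result:
    status: str             # "answer" or "needs_human_review"
    value: Optional[float]
    reason: str


def resolve_or_abstain(readings, tolerance=0.0):
    """Answer only when every source reports a value and all values agree within tolerance."""
    values = [r.value for r in readings if r.value is not None]
    if not readings or len(values) < len(readings):
        return Result("needs_human_review", None, "missing data")
    if max(values) - min(values) > tolerance:
        return Result("needs_human_review", None, "sources contradict")
    return Result("answer", values[0], "sources agree")


# Illustrative data only: two documents disagree on a specified concrete strength (psi).
readings = [
    Reading("structural drawings", 5000.0),
    Reading("mix design submittal", 4000.0),
]
result = resolve_or_abstain(readings)
print(result.status, "-", result.reason)  # needs_human_review - sources contradict
```

Even when the function returns an answer, that value is only a consistent reading of the documents, and final certification stays with the responsible engineer.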