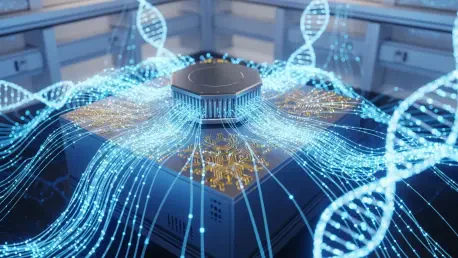

The rapid expansion of genomic databases has created a computational bottleneck that conventional silicon-based architectures are increasingly unable to manage efficiently. Researchers from a collaboration involving the Wellcome Sanger Institute, the University of Oxford, the University of Cambridge, and the University of Melbourne have recently encoded a complete genome into a quantum computer for the first time. The project used IBM's 156-qubit Heron processor to load the entire genetic sequence of the Hepatitis D virus, which is approximately 1,700 nucleotides long. By treating biological information as a series of quantum states, the team demonstrated a potential pathway for easing the processing delays that currently hinder advanced genetic research. The achievement serves as a foundational proof of concept for the emerging field of quantum genomics, suggesting that the limitations of traditional digital analysis might soon be bypassed.

Navigating the Complexities of Pangenomic Data Structures

The primary motivation behind this technological shift lies in the inherent difficulty of analyzing pangenomes, which are comprehensive maps representing the genetic diversity of entire populations. Unlike a single reference genome, a pangenome functions like a maze of branching paths that represent variations, insertions, and deletions across different individuals. Traditional computing systems frequently struggle with these graph structures because the number of possible paths can grow exponentially with the number of variant sites, driving up computational time and energy consumption as datasets grow. The researchers mapped the Hepatitis D virus into a format compatible with quantum gates, showing that genomic data of this kind can be represented on quantum hardware rather than only in classical memory. This method allowed complex genetic relationships to be represented in a more integrated manner, providing a glimpse into how researchers might eventually handle far larger datasets.
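As a rough illustration of the branching structure described above, the following minimal Python sketch builds a toy variation graph and enumerates every path through it. The node names, the sequences, and the helper `enumerate_haplotypes` are hypothetical and are not drawn from the researchers' work; real pangenome graphs are far larger and are typically stored in dedicated formats.

```python
from typing import Dict, List

# Hypothetical toy variation graph: node id -> sequence segment.
nodes: Dict[str, str] = {
    "s1": "ACG",   # shared segment
    "a1": "T",     # first variant site, one allele
    "a2": "G",     # first variant site, alternative allele (a substitution)
    "s2": "CC",    # shared segment
    "b1": "AAT",   # insertion carried by only some genomes
    "s3": "GA",    # shared segment
}

# Directed edges: node id -> nodes that may follow it.
edges: Dict[str, List[str]] = {
    "s1": ["a1", "a2"],
    "a1": ["s2"],
    "a2": ["s2"],
    "s2": ["b1", "s3"],  # the direct s2 -> s3 edge skips the insertion
    "b1": ["s3"],
    "s3": [],
}


def enumerate_haplotypes(start: str, end: str) -> List[str]:
    """Return the sequence spelled by every path from start to end."""
    results: List[str] = []

    def walk(node: str, prefix: str) -> None:
        sequence = prefix + nodes[node]
        if node == end:
            results.append(sequence)
            return
        for successor in edges[node]:
            walk(successor, sequence)

    walk(start, "")
    return results


haplotypes = enumerate_haplotypes("s1", "s3")
for haplotype in haplotypes:
    print(haplotype)
print("number of paths:", len(haplotypes))
```

With two independent two-way variant sites the graph already contains four distinct paths, and every additional site of this kind doubles the count, which is why exhaustive path-by-path enumeration becomes impractical for population-scale graphs.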

Quantum computers operate on qubits, which can exist in a superposition of states rather than the definite 0 or 1 of a classical bit. In the context of genomics, this capability could in principle allow an algorithm to act on many paths through a pangenome graph at once. A measurement still returns only a single outcome, however, so any speedup depends on algorithms that steer probability toward the correct answer. While a classical algorithm might take hours to identify a specific genetic marker within a population-wide dataset, a quantum-native approach could theoretically pinpoint the same information in a fraction of the time. This shift is particularly relevant as the field moves toward personalized medicine, where the ability to compare an individual’s DNA against a vast pangenomic library is crucial for accurate diagnosis. By moving away from the rigid constraints of bit-based logic, the scientific community is exploring a paradigm in which biological data may be well suited to the probabilistic nature of quantum hardware.
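To make the idea of superposition more concrete, here is a minimal classical simulation in plain Python of a small qubit register. It is a sketch under stated assumptions, not the method used in the study: the function names `apply_hadamard` and `uniform_superposition` are hypothetical, and the Hadamard gate is chosen simply because it is the standard way to create an equal superposition.

```python
import math
from typing import List


def apply_hadamard(state: List[float], target: int) -> List[float]:
    """Apply a Hadamard gate to one qubit of a real-valued state vector."""
    h = 1.0 / math.sqrt(2.0)
    mask = 1 << target
    new_state = state[:]
    for i in range(len(state)):
        if i & mask == 0:          # visit each pair of basis states once
            j = i | mask           # partner index with the target bit set
            a, b = state[i], state[j]
            new_state[i] = h * (a + b)
            new_state[j] = h * (a - b)
    return new_state


def uniform_superposition(n_qubits: int) -> List[float]:
    """Start in |00...0> and apply a Hadamard gate to every qubit."""
    state = [0.0] * (2 ** n_qubits)
    state[0] = 1.0
    for qubit in range(n_qubits):
        state = apply_hadamard(state, qubit)
    return state


state = uniform_superposition(3)
probabilities = [amplitude ** 2 for amplitude in state]
print("basis states tracked:", len(state))
print("probability of each outcome:", probabilities[0])
```

Three qubits already require eight amplitudes, and each extra qubit doubles that number, which is why classical simulation becomes costly. Note that measuring this register would yield just one of the eight outcomes at random, so superposition alone does not deliver an answer; a useful algorithm must reshape the amplitudes before measurement.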

Scaling Quantum Solutions for Future Clinical Applications

Despite the successful encoding of a viral genome, the transition to processing full human pangenomes remains a daunting technical challenge that requires significant hardware scaling. A single human genome is nearly two million times larger than the Hepatitis D sequence, and current quantum processors like the Heron still face issues with coherence and error rates when handling high-density data. Critics within the bioinformatics community remain cautious, noting that a clear quantum advantage has yet to be demonstrated over highly optimized classical algorithms that have been refined for decades. To reach the goal of processing genetic data up to 100 times faster than existing tools, the next phase of development must focus on increasing qubit counts while simultaneously improving error-correction protocols. This technological evolution will likely require a hybrid approach in which classical systems manage data pre-processing while quantum circuits handle the most complex alignment tasks.
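The size gap described above can be checked with some back-of-the-envelope arithmetic. The sketch below assumes a human genome of roughly 3.1 billion bases, a figure not given in the article, and uses two hypothetical helpers: `classical_bits` (two bits per base, since there are four bases) and `index_qubits` (the size of a register able to label every position in superposition). The index-register scheme is an illustrative assumption, not the encoding the researchers used.

```python
HDV_GENOME_LENGTH = 1_700               # approximate, as stated in the article
HUMAN_GENOME_LENGTH = 3_100_000_000     # approximate; an assumption for illustration


def classical_bits(n_bases: int) -> int:
    """Bits needed to store a sequence at two bits per base (A, C, G, T)."""
    return 2 * n_bases


def index_qubits(n_bases: int) -> int:
    """Qubits needed for a register that can label every position (hypothetical scheme)."""
    return (n_bases - 1).bit_length()   # smallest n with 2**n >= n_bases


scale_factor = HUMAN_GENOME_LENGTH / HDV_GENOME_LENGTH
print(f"human / HDV length ratio: {scale_factor:,.0f}")

for name, length in [("HDV", HDV_GENOME_LENGTH), ("human", HUMAN_GENOME_LENGTH)]:
    print(name, "classical bits:", classical_bits(length),
          "| index qubits:", index_qubits(length))
```

Under these assumptions the qubit count grows only logarithmically with sequence length, but that should not be read as making human-scale data easy: the number of gate operations needed to load the data generally still grows with the amount of data, and longer circuits are exactly where the coherence and error-rate limits mentioned above become the constraint.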

The successful integration of biological data into quantum hardware established a vital precedent for the future of genomic science and high-speed medical research. Scientists recognized that moving from viral sequences to the vast complexities of human genetics necessitated a rigorous focus on hardware stability and algorithm optimization. The focus shifted toward developing standardized toolkits that allowed global research institutions to experiment with pangenomic graphs in a quantum-native environment. Stakeholders prioritized the creation of robust error-mitigation strategies to ensure that the results of these high-speed analyses remained accurate for clinical use. Ultimately, the collaborative efforts from 2026 to 2028 focused on bridging the gap between theoretical physics and molecular biology. These initiatives provided a roadmap for building scalable platforms that aimed to transform how the global scientific community understood human variation and disease progression.